AI agents now operate inside the enterprise, at the customer interface, and across organizational boundaries. D&O insurance was built for a world where humans made the decisions and boards reviewed them annually. That world is over. Four institutional markets are now pricing AI governance, each through a different mechanism. The boards that build continuous governance will earn better D&O terms. The boards that don't will pay for the gap.

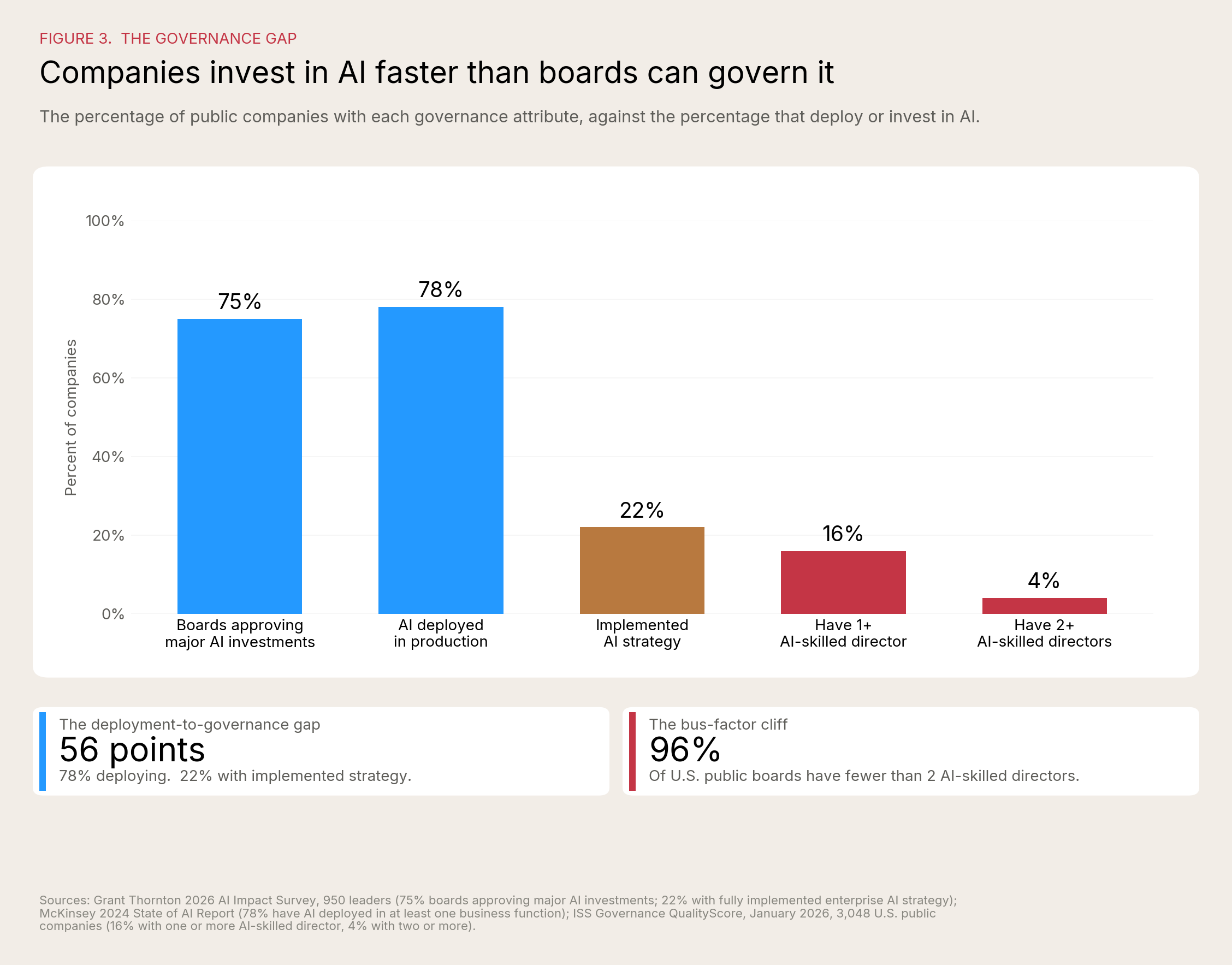

Seventy-eight percent of business executives lack strong confidence that they could pass an independent AI governance audit within 90 days. That figure, from Grant Thornton's 2026 AI Impact Survey of 950 leaders across ten industries, is the most recent example of what practitioners now call the AI proof gap. It is also the number directors should bring to their next renewal meeting.

"Leaders who have invested in governance aren't moving slower. They're moving faster because they have the confidence to scale. The ones who haven't built it yet are one incident away from a much harder conversation." - Tom Puthiyamadam, Managing Partner, Grant Thornton Advisors LLC

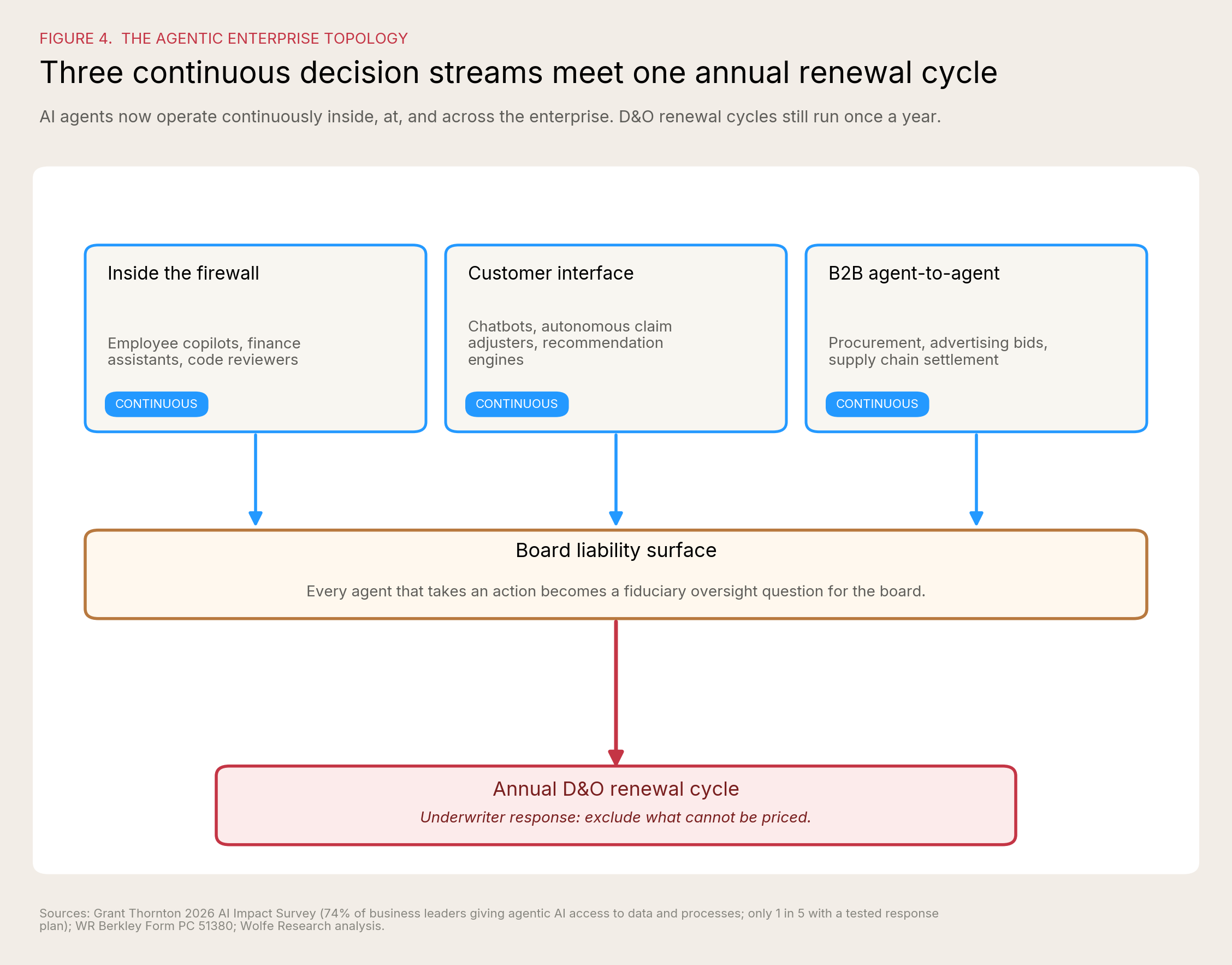

The agentic enterprise has arrived. AI agents now operate inside the firewall as employee copilots, finance assistants, and code reviewers. They operate at the customer interface as chatbots, autonomous claim adjusters, and recommendation engines. A growing number transact with other agents across organizational boundaries, handling procurement, advertising bids, and supply chain settlement without human review. According to Grant Thornton, 74% of business leaders have given agentic AI access to their data and processes. Only one in five has a tested response plan for when something goes wrong.

This is the case for Continuous Governance of the Agentic Enterprise: the only governance topology that matches the technology topology. Quarterly board cycles cannot oversee agents that take ten thousand actions a minute. Annual D&O renewal cycles cannot price exposure that compounds in real time. The mismatch is structural, and the people who saw it first were the underwriters.

The good news for boards is that the underwriters' response is also the opening for boards that govern well. Latham & Watkins reported in August 2025 that the D&O insurance market remains favorable to insureds, with many carriers competing for placements and willing to negotiate expanded coverage and policy enhancements. Strong AI governance is becoming a credit factor in that negotiation, the same way SOC 2 attestation became a credit factor in cyber underwriting fifteen years ago. The boards that document continuous oversight first will pay less for D&O, get cleaner terms at renewal, and build a defensible record for whatever litigation arrives next.

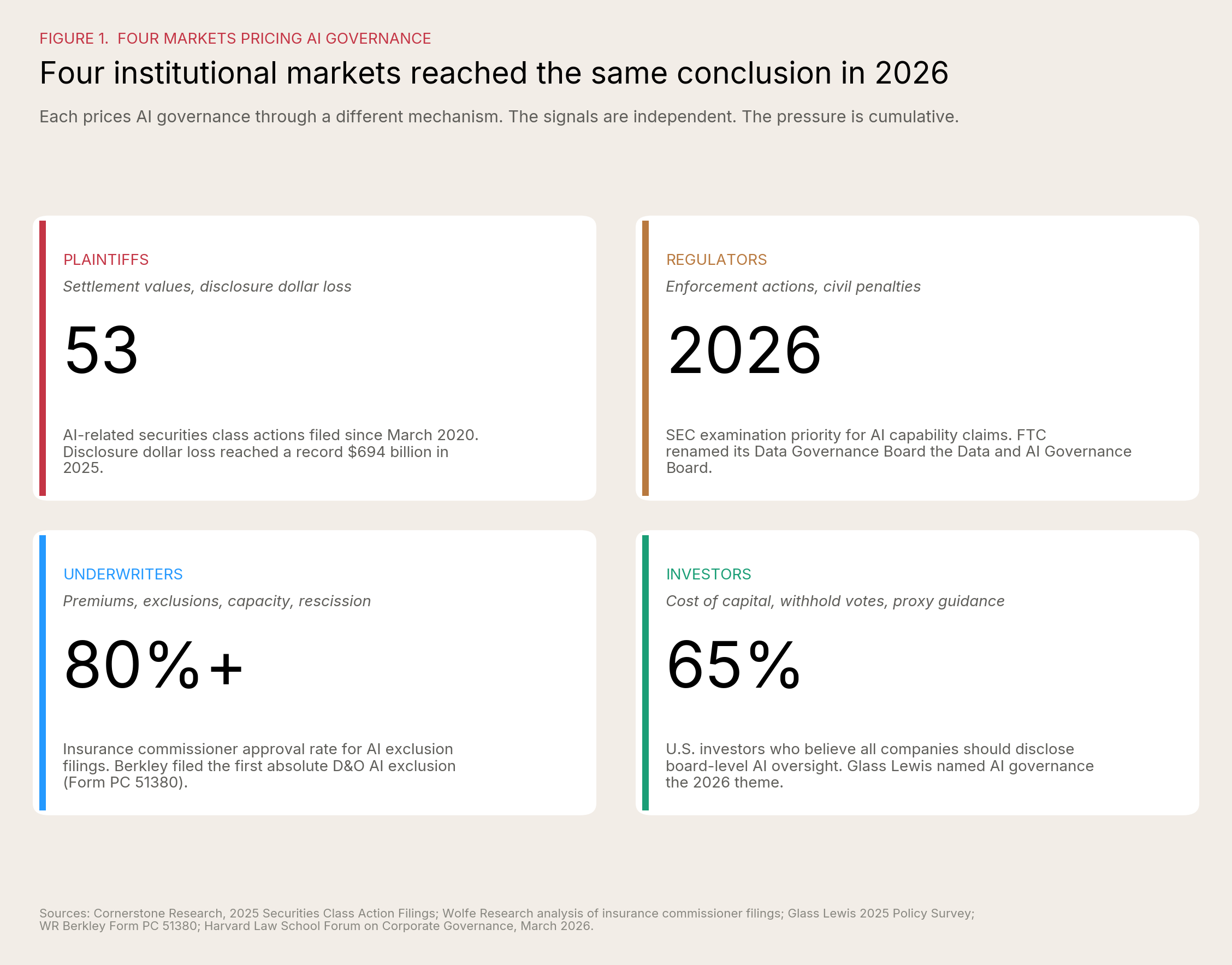

Four markets are pricing AI governance, each through a different mechanism

Each of these markets prices AI governance through a different mechanism, and that is exactly what makes the convergence consequential. Plaintiffs price it through settlement values and disclosure dollar loss. Regulators price it through enforcement actions, civil penalties, and the personal liability that attaches when officers signed off on what they could not see. D&O underwriters price it through premiums, exclusions, capacity, and in some cases policy rescission. Institutional investors price it through cost of capital, withhold votes against governance committee chairs, and the proxy advisor recommendations that compound across cycles. The signals are independent. The pressure is cumulative. Boards that have built credible AI governance are now being priced differently across all four.

The plaintiffs' bar. More than fifty AI-related securities class actions have been filed since March 2020. Through the first half of 2025, AI was the largest single category of event-driven securities class actions, exceeding crypto, COVID, cybersecurity, and SPACs individually. Disclosure dollar loss on securities filings hit a record $694 billion in 2025 according to Cornerstone Research. The legal pattern is consistent: plaintiffs do not need to prove the AI failed, they need to prove the board failed to govern it. The price here is the settlement value, plus the disclosure-dollar-loss multiplier on whatever stock decline accompanies it.

Regulators. The Securities and Exchange Commission made AI capability claims an examination priority for 2026. SEC actions against Presto Automation and two registered investment advisors established that "AI-washing" is now a category of enforcement. The Department of Justice has filed criminal charges in adjacent cases. The Federal Trade Commission renamed its Data Governance Board the Data and AI Governance Board. The price here is enforcement: investigations, civil penalties, and the personal liability that attaches to officers who signed disclosures they could not defend.

D&O underwriters. W. R. Berkley (NYSE: WRB) filed Form PC 51380, the industry's first absolute AI exclusion. ISO Form CG 40 47, the standard commercial general liability endorsement excluding generative AI, took effect January 1, 2026. AIG (NYSE: AIG), Chubb (NYSE: CB), Travelers (NYSE: TRV), Berkshire Hathaway (NYSE: BRK.A), and Hamilton Insurance Group (NYSE: HG) are filing similar language across multiple lines. Wolfe Research analysis found that insurance commissioners have approved more than 80% of AI exclusion requests. The price here is the renewal: premium, exclusions, capacity, and in some cases rescission.

Institutional investors. Glass Lewis identified AI governance as the defining theme of the 2026 proxy season. Sixty-five percent of U.S. investors now believe all companies should provide clear board-level AI oversight disclosure. Forty-nine percent believe oversight should be codified in committee charters. Among S&P 100 companies, just over half disclose board-level AI oversight, and fewer than one in three disclose both an oversight structure and a formal AI policy. JPMorgan Chase has announced that beginning with the 2026 proxy season, it will use an internal AI platform called Proxy IQ to analyze proxy data from approximately three thousand annual meetings. Glass Lewis has announced that by 2027 it will transition to AI-enabled voting frameworks calibrated to individual client strategies. The institutions evaluating board AI governance are now using AI to do the evaluation. The price here is cost of capital, withhold votes, and the proxy advisor recommendation that follows the board into next year's renewal cycle.

A signal worth naming inside the data: 46% of executives cite governance and compliance as the leading cause of AI underperformance, the single largest factor by a wide margin. Only 11% identify risk and compliance as the function needing the most focus to meet their AI ambitions. Knowing what is failing and refusing to fund the solution is exactly the kind of organizational paradox boards exist to override. The C-suite is not going to override it on its own.

That is one side of the story. The other side is that the same carriers writing exclusions are also developing affirmative AI coverage, expanding insured-person definitions, and offering preferred terms to companies that can document oversight. Aon (NYSE: AON) has built a framework for augmenting Loss and Insured Person definitions to preserve AI-related coverage. Allianz published a 2026 D&O Insights report explicitly positioning AI governance as a pricing input. Specialty AI insurers including Munich Re, Armilla, Chaucer, Testudo, and the Artificial Intelligence Underwriting Company (AIUC) are writing standalone policies for companies that can pass governance diligence. The market is not closing. It is segmenting. Boards that document continuous governance oversight will increasingly find themselves on the favorable side of the segmentation.

Two coverage risks a board should be honest about

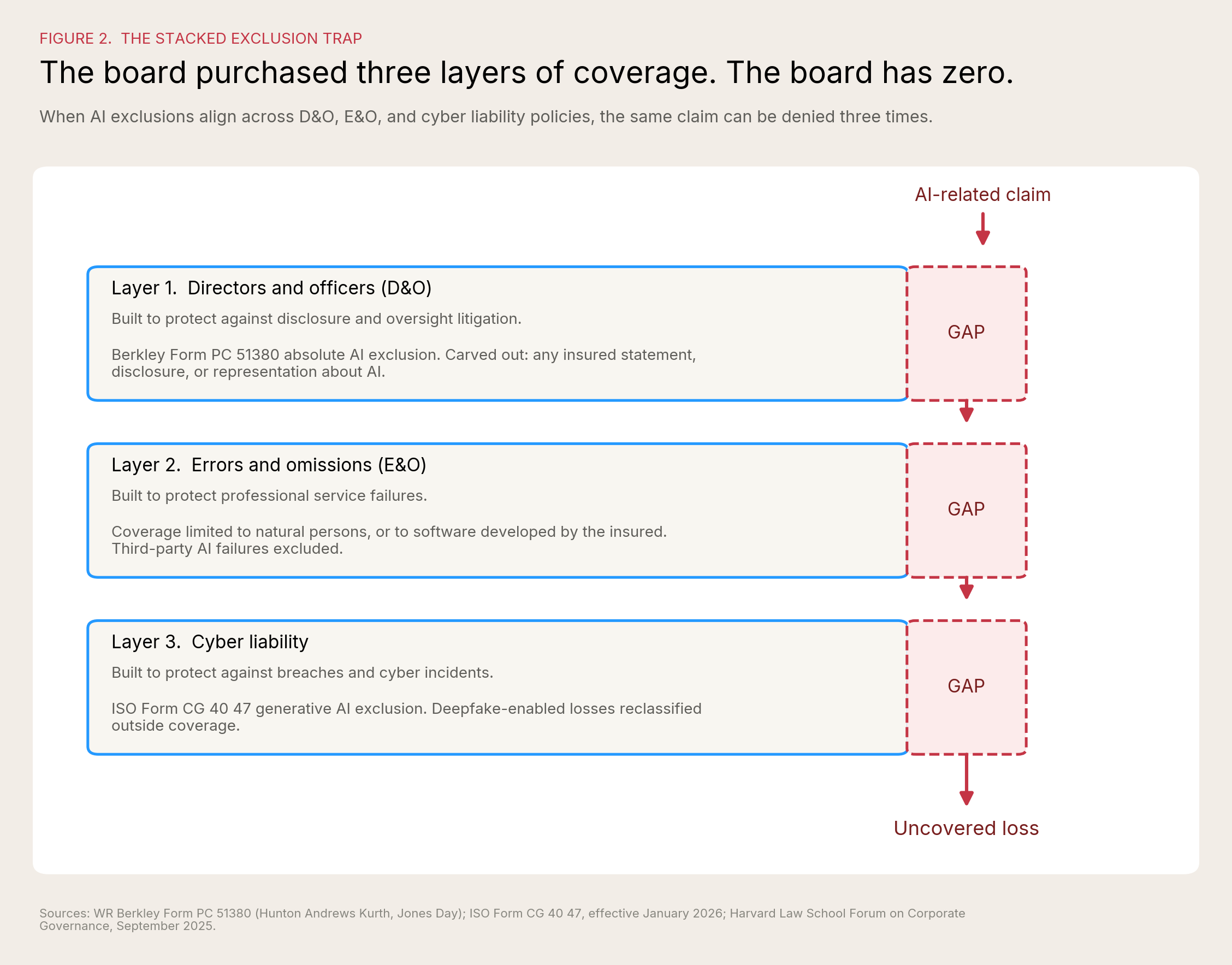

Most boards still believe they have three layers of coverage for an AI-related event. That belief is being tested in two distinct ways.

The stacked exclusion. Consider one concrete scenario practitioners now use to make the point. A company drafts SEC disclosures in Microsoft Word. Word uses embedded AI grammar and style suggestions. A securities suit follows an alleged misstatement. If the D&O policy includes an exclusion for "any claim arising from the use of generative AI," that claim could be excluded entirely. The exclusion does not require the AI to have caused the misstatement. It requires only that AI was used. As Hunton Andrews Kurth and GB&A Insurance documented in the Harvard Law School Forum, the operative language in current exclusions ("based upon, attributable to, arising out of, or related to, in whole, or in part") can apply even where AI played a negligible role and even when final decisions were human-made. The exclusion can extend to "any party, including but not limited to non-insured parties," which means vendor and third-party AI failures can flow back to the policyholder.

A policyholder with D&O, E&O, and cyber liability insurance can find all three policies declining the same AI-related claim. E&O may decline because the policy covers natural persons providing services, not artificial systems. D&O may decline via a new AI exclusion or via the standard professional services exclusion. Cyber may decline because AI was deployed in the security stack, or because the loss originated from a deepfake the carrier classifies as AI-enabled. The board thought it had three layers. The board may have fewer than it thinks.

The rescission risk. This is the threat practitioners discuss less often and the one with the larger downside. Insurance applications increasingly ask specific questions about AI usage: what percentage of revenue derives from generative AI, which systems incorporate AI capabilities, how AI is deployed across the organization. Those answers are point-in-time. AI usage during a 12-month policy period is not. As Michael Levine, partner at Hunton Andrews Kurth, has put it, when the number changes during the policy and the company experiences a loss, the carrier may argue the application misrepresented revenue, deny the claim, and rescind the policy. Rescission is not exclusion. Rescission means the policy is treated as if it never existed: complete exposure with no coverage at all.

For the agentic enterprise, this is the structural problem. Agent populations grow weekly. Use cases multiply faster than annual renewal questionnaires can capture. The visibility problem compounds: for every AI tool a company knowingly purchases, employees are using one to two additional applications leadership has never heard of, and roughly two-thirds of employees use personal accounts for AI tools rather than the enterprise versions their companies have paid for. A board that signs an AI usage representation in April can have a meaningfully different AI footprint by June. Without a continuous-documentation discipline, every renewal cycle is a rescission risk waiting to be tested.
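The gap between a point-in-time application and a moving AI footprint can be made concrete with a small comparison. The following is a minimal sketch, not a carrier's or broker's method: the `AIUsageSnapshot` fields, the `representation_drift` helper, the one-point tolerance, and the sample figures are all assumptions invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIUsageSnapshot:
    """AI usage as represented at one point in time (field names are assumptions)."""
    genai_revenue_pct: float      # share of revenue derived from generative AI
    ai_systems: frozenset         # names of systems that incorporate AI capabilities


def representation_drift(application, current, revenue_tolerance_pct=1.0):
    """List the ways current usage differs from what the application stated.

    The tolerance is an illustrative threshold, not an underwriting standard.
    """
    findings = []
    delta = current.genai_revenue_pct - application.genai_revenue_pct
    if abs(delta) > revenue_tolerance_pct:
        findings.append(f"generative AI revenue share moved {delta:+.1f} points")
    undisclosed = sorted(current.ai_systems - application.ai_systems)
    if undisclosed:
        findings.append("systems not in application: " + ", ".join(undisclosed))
    return findings


# Invented example: what was represented in April versus the footprint in June.
april = AIUsageSnapshot(4.0, frozenset({"support-chatbot"}))
june = AIUsageSnapshot(9.5, frozenset({"support-chatbot", "pricing-agent"}))

for finding in representation_drift(april, june):
    print(finding)
```

Run on a regular cadence against the figures actually submitted to the carrier, a comparison like this gives the board a dated record of when the representation stopped matching reality.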

Two structural problems compound the coverage gap. Many D&O policies cover only "duly elected or appointed officers," which means a Chief AI Officer who is not formally recognized in bylaws may fall into a coverage gray zone exactly when most exposed. And the empirical analog for what an agentic AI failure does to shareholder value is already documented: an April 2026 ISS-STOXX study of 176 cyber incidents in the Russell 3000 found that affected companies underperformed the broader market by approximately 5% over three years. Cyber incidents are not isolated operational events. They are enterprise-level crises with lasting financial consequences. Underwriters expect agentic AI failures to follow the same pattern, which is why they are pricing for it now.

These are real risks. They are also manageable. Most can be negotiated, documented, or reframed before the next renewal.

The opportunity is now structurally measurable

The same pressure that creates exclusions creates differentiation for boards that govern well. As of 2026, that differentiation is empirical, not aspirational.

Grant Thornton's survey produced a clean performance separation by governance maturity. Organizations with fully integrated AI report revenue growth at 58%; organizations still piloting report 15%. Innovation acceleration is 59% versus 24%. Output quality is 64% versus 35%. Audit-readiness confidence is 74% versus 7%, a tenfold gap. The pattern is consistent: fully integrated organizations are not excelling in one area and lagging in others. They are outperforming across every measured dimension. The single largest cause of AI underperformance, cited by 46% of executives, is governance or compliance failure. The difference between leaders and laggards in 2026 is not access to better technology. It is governance built as a performance system.
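The size of each gap can be checked with simple arithmetic. The sketch below uses only the four percentage pairs quoted above; the dictionary labels and the `gap_ratio` helper are illustrative names, not part of the survey.

```python
# Percentages quoted above, as (fully integrated, still piloting).
# Labels and helper names are illustrative, not taken from the survey.
SURVEY_PAIRS = {
    "revenue growth": (58, 15),
    "innovation acceleration": (59, 24),
    "output quality": (64, 35),
    "audit-readiness confidence": (74, 7),
}


def gap_ratio(integrated, piloting):
    """Return how many times larger the integrated figure is than the piloting one."""
    return integrated / piloting


for label, (integrated, piloting) in SURVEY_PAIRS.items():
    ratio = gap_ratio(integrated, piloting)
    points = integrated - piloting
    print(f"{label}: {ratio:.1f}x ({points} percentage points)")
```

The audit-readiness ratio works out to roughly 10.6, which is where the tenfold figure comes from. The other three ratios fall between about 1.8 and 3.9.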

This is the data point that turns the D&O conversation around. For a decade, governance has been framed as a cost center, a compliance overhead, a constraint on speed. The 2026 evidence shows the opposite. Companies whose boards built governance infrastructure are now growing faster, innovating faster, and producing higher-quality output than those that did not. They are also the companies that can pass an independent audit, which means they are the ones underwriters will write at preferred terms.

Three concrete advantages are already visible at renewal:

Better renewal economics. Aon, Marsh, and Woodruff Sawyer have all reported in 2025 and 2026 that boards bringing documented AI governance to renewal meetings are negotiating narrower exclusions, securing carve-backs, and in some cases obtaining affirmative AI coverage. This is the SOC 2 dynamic playing out in real time. The first companies to attest pay less and get cleaner terms.

Defensible decision-making record. The same documentation that satisfies an underwriter satisfies a plaintiff's deposition, a regulator's inquiry, and a proxy advisor's voting recommendation. Continuous oversight, mapped to existing committees, with named accountability, produces a single artifact priced into all four of the convergence mechanisms simultaneously. One investment, four pricing benefits.

Strategic optionality. Boards that have inventoried agents, tiered them by risk, and built named accountability are the boards that can deploy AI faster, not slower. Governance is acceleration when it removes uncertainty. The competitive advantage is not having the most agents. It is having the most agents the board can confidently approve.

The C-suite is not going to build this on its own. Inside Grant Thornton's data, COOs are nearly three times more concerned than CIOs and CTOs about regulatory and compliance uncertainty related to agentic AI. The two executives most directly accountable for agentic deployment cannot agree on the regulatory exposure. That disagreement is invisible to the company that does not surface it, and it is exactly what an underwriter probes for at renewal. A coherent risk posture is a board responsibility precisely because the C-suite cannot produce one without intervention.

Three things directors can do today

These are designed to fit inside a single afternoon, not a quarterly initiative.

1. Ask for the agent inventory at the next board meeting. Not next quarter. Next meeting. The question is short and the answer is diagnostic: how many AI agents does the company operate, what do they do, who deployed each one, and which committee owns oversight? If the answer takes longer than ten minutes to deliver, the board has just identified its first governance gap. Expect to discover that for every AI tool the company knowingly purchased, employees are using one to two more, and that two-thirds of usage runs through personal accounts rather than enterprise licenses. Better that this surface in a board meeting than in a deposition or in a rescission letter from a carrier.

2. Ask the general counsel and broker to produce a one-page coverage map. D&O, E&O, cyber, EPLI, and CGL. For each, three questions: does the policy contain an AI exclusion or AI-related sublimit; if a stacked-exclusion event occurred tomorrow, which policy responds first; and what AI usage representations were made in the application, with documentation showing they remain current. Also: is the Chief AI Officer or AI lead recognized as an officer under bylaws and indemnification agreements? This is a sixty-minute exercise for an experienced broker. The output goes into the next risk committee packet.

3. Pressure-test the AI incident response playbook, and ask the COO and the CIO/CTO separately what they would do. Forty-eight percent of organizations have a documented AI incident playbook they have never tested. Twenty percent have a tested playbook with assigned owners. Direct management to run a tabletop exercise this quarter against a real scenario: a customer-facing agent makes thousands of bad decisions overnight, or a deepfake-enabled wire transfer clears, or a model drift event corrupts financial reporting. Then ask the COO and the CIO/CTO the same questions about regulatory exposure and incident classification, separately. If the answers differ materially, the board has surfaced the alignment gap before the underwriter does.

None of these require a vote. None require a new committee. None require capital. They require an afternoon and a willingness to ask the questions.
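For the management team asked to produce the inventory in the first step, the four diagnostic questions map naturally onto a simple record. What follows is a minimal sketch under stated assumptions: the `AgentRecord` fields, the `inventory_gaps` helper, and the sample agents are hypothetical and exist only to show the shape of the answer a board could ask for.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AgentRecord:
    """One row of a hypothetical agent inventory (field names are assumptions)."""
    name: str
    purpose: str                          # what the agent does
    deployed_by: Optional[str]            # who deployed it, if known
    oversight_committee: Optional[str]    # which committee owns oversight, if any
    sanctioned: bool = True               # False for tools running on personal accounts


def inventory_gaps(agents):
    """Group agent names by the diagnostic question the inventory cannot answer."""
    gaps = {"no_deployer": [], "no_committee": [], "unsanctioned": []}
    for agent in agents:
        if not agent.deployed_by:
            gaps["no_deployer"].append(agent.name)
        if not agent.oversight_committee:
            gaps["no_committee"].append(agent.name)
        if not agent.sanctioned:
            gaps["unsanctioned"].append(agent.name)
    return gaps


# Invented sample data for illustration only.
inventory = [
    AgentRecord("claims-adjuster", "settles low-value claims", "COO office", "Risk"),
    AgentRecord("procurement-bot", "negotiates supplier orders", "Procurement", None),
    AgentRecord("personal-chat-tool", "drafts customer emails", None, None, sanctioned=False),
]

gaps = inventory_gaps(inventory)
print(f"{len(inventory)} agents inventoried")
for question, names in gaps.items():
    print(f"{question}: {names}")
```

Any non-empty list is a governance gap the board can name in the minutes, with the specific agents attached.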

What D&O carriers will be doing for the next 12 months

A board that wants to plan for the next renewal cycle should expect five shifts.

The exclusion language will keep tightening. Berkley's absolute exclusion is the leading edge. Expect more carriers to follow with similar language. Expect AI definitions to broaden, not narrow, until they reach the limits of regulatory tolerance. Boards should assume that any 2026 renewal without active negotiation will come back with more AI-related restrictions than the prior year.

Capacity will segment by governance maturity. Companies that can document AI oversight will increasingly find themselves placed with carriers offering affirmative AI coverage and broader insured-person definitions. Companies that cannot will increasingly find themselves placed with carriers offering only excluded coverage. The split will become visible by mid-2026 renewals and pronounced by year-end.

Underwriting questionnaires will get longer and more specific. Generic AI questions are already being replaced with detailed inquiries about model testing cadence, vendor governance, board reporting frequency, and shadow AI controls. By Q4 2026, expect underwriters to request supporting documentation, not just questionnaire answers. Treat the renewal submission like a 10-K, not a survey.

Specialty AI liability products will mature. Munich Re, Armilla, AIUC, Corgi, Mayflower Specialty, Embroker, Chaucer, and Testudo are all writing AI-specific policies in 2026. Limits will rise. Pricing will compress as competition increases. Boards should expect standalone AI liability to become a normal supplement to traditional D&O for AI-heavy companies, the way standalone cyber became a normal supplement to commercial general liability after 2014.

The credentialing question will get answered. Cyber insurance underwriting normalized once SOC 2 became the recognized attestation standard. AI insurance underwriting will normalize once an equivalent standard emerges. Multiple frameworks are competing for that role: ISO/IEC 42001, NIST AI RMF, the EU AI Act conformity assessment, and independent rating standards. By the end of 2026, expect carriers to begin specifying which frameworks they recognize and which they do not. Boards already aligned to a recognized framework will be advantaged. Boards that are not will be re-doing the work.

Five questions every director should answer before renewal

If a director cannot answer all five with documentation in hand, the board is in the AI proof gap. The five are adapted from the Grant Thornton diagnostic and translated into the language of fiduciary oversight:

- Do the COO, the CIO/CTO, and the General Counsel share a common definition of AI success, AI risk, and AI accountability, on the record?

- Can the company consistently measure ROI across AI initiatives and identify which ones should scale, continue, or stop?

- Has the board defined where AI may act autonomously, where human oversight is required, and which named officer is accountable for outcomes in each tier?

- Can the company produce auditable evidence of how its material AI systems make decisions today, sufficient to satisfy a regulator, an underwriter, and a plaintiff in deposition?

- If an AI system failed tomorrow, does the board have a tested response plan and the ability to trace what went wrong, with named accountability, before the carrier and the regulator arrive?

A "no" on any of the five is a renewal-cycle risk. A "no" on three or more is a Caremark-cycle risk, meaning exposure to a claim that directors breached their duty of oversight.
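The scoring rule is simple enough to write down directly. This is a minimal sketch of the two thresholds stated above; the `classify_diagnostic` name and the label for the all-yes case are assumptions, since the text does not name that outcome. A "yes" should be recorded only when the director has documentation in hand.

```python
def classify_diagnostic(answers):
    """Classify five yes/no answers (True = yes, with documentation in hand).

    Thresholds follow the rule in the text: any "no" is a renewal-cycle risk,
    three or more is a Caremark-cycle risk. The all-yes label is an assumption.
    """
    if len(answers) != 5:
        raise ValueError("expected exactly five answers, one per question")
    noes = sum(1 for answer in answers if not answer)
    if noes >= 3:
        return "Caremark-cycle risk"
    if noes >= 1:
        return "renewal-cycle risk"
    return "no gap flagged"


# Invented example: documented answers exist for questions 1, 2, and 4 only.
print(classify_diagnostic([True, True, False, True, False]))
```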

The bottom line

The agentic enterprise has changed what governance means. Governance is no longer a quarterly review of decisions a human made last week. It is continuous oversight of systems making decisions right now, across every employee touchpoint, every customer interaction, and every B2B transaction. Four markets (plaintiffs, regulators, underwriters, and investors) are now putting a price on it. Most boards have not yet adapted to it.

The boards that build continuous oversight, document it, map it to existing committees, and recognize the AI lead as an insured officer will pay less for D&O at renewal, attract better capacity, and build a defensible record for whatever comes next. The boards that delay will pay for the gap, in premium, in capacity, in coverage, and eventually in claims.

D&O carriers are not adversaries in this story. They are the first commercial counterparty to take AI governance seriously, and they are going to be the loudest voice in the boardroom for the next twelve months. Plaintiffs, regulators, investors, and carriers are all converging on the same question: how does the board prove it governed an enterprise where the agents never stop?

The institution that owns the answer wins the category. The boards that have the answer get the better renewal.