It's almost the end of April. Four months into 2026, we're getting closer and closer to going vertical on the exponential intelligence curve.

There's so much going on that I wanted to pause, take stock, and connect the dots.

In January, Dario Amodei, the CEO of Anthropic and one of the key leaders in AI, tried to wake the world up with a 20,000-word essay titled "The Adolescence of Technology." By April, that essay reads like an artifact of a slower era. Frontier model release cadence at Anthropic, OpenAI, and Google DeepMind has compressed from quarters to weeks.

Meanwhile, as AI developments advanced, the Pentagon attempted to designate Anthropic a national-security supply chain risk, and U.S. District Judge Rita Lin called the designation Orwellian. While countries around the world realize they are mere spectators in the AI race, Canada and Germany jointly announced a $20 billion sovereign AI champion with the merger of Cohere and Aleph Alpha.

Slowly but surely, we're seeing the impact permeate the economy. The S&P 500 ended 2025 with 400,000 fewer employees than it began with, the first decline in eight years. And as enterprises fumble with how to deploy AI agents, DeepSeek V4 matched the U.S. closed-source frontier the same week OpenAI shipped GPT-5.5, at a fraction of the inference cost. On the GPT-5.5 launch call, OpenAI's chief scientist Jakub Pachocki said the past two years have been "surprisingly slow."

If this is "slow", no one is prepared for AI development to shift into high gear.

Three forces are converging at speeds the institutions designed to oversee them were not built to handle. The technology itself is compounding faster than any general-purpose technology in recorded history. The companies building it have shifted, almost without comment, from a commercial sector under light-touch oversight to a national-strategic capability that governments now treat the way they treated nuclear, semiconductor, and aerospace capabilities. And the public conversation that should be governing all of this has been captured by an existential-risk debate measured in p(doom), a question the people building the technology cannot agree on.

The truth is we are in uncharted territory. The United States and China are now in an AI Cold War, and the governments running both sides are organized around winning, not governing. The American federal posture, as the Pentagon-Anthropic dispute made plain, is that no private company should be permitted to set conditions on how the most powerful technology in human history is deployed by the state. The Chinese posture, as DeepSeek V4 made plain, is that the fastest way to undermine the trillions of dollars the United States has bet on closed-source frontier AI is to give the technology away for a fraction of the price. As "Big Tech" has transformed into "Big AI", every major lab now has a frontier release schedule measured in weeks, a national-security entanglement measured in classified contracts, and a workforce displacement story measured on the S&P 500 payroll line. They are all racing. None of them is governing.

Which leaves the boardroom.

For most of the past century, when a technology this consequential emerged, governance came from elsewhere. Antitrust agencies, sectoral regulators, the FDA, the FCC, the SEC, the ITU. Those institutions still exist. Most are not built to operate at the speed AI now moves. The regulatory frameworks closest to having something to say (the EU AI Act, California's SB 53, the UK AI Security Institute, the U.S. AI Action Plan) are either months from full enforcement, narrowly scoped to disclosure, or actively being relitigated by the same governments that authored them. The policy gap will not close in time. Not in 2026. Probably not in this decade.

The last institution that has both the legal duty and the corporate authority to impose fiduciary oversight on frontier AI is a company's board of directors. Not because boards are uniquely qualified. Because they are uniquely positioned. They sit between the operating company and the capital markets, the workforce and the regulators, the technology vendor and the customer. They have the legal duty and the institutional standing to ask the questions most leaders cannot. They are the last best hope for continuous governance of the agentic enterprise.

What follows is what happens when boards take that responsibility seriously, and what happens when they do not.

Raising The Alarm

On Monday, January 26, 2026, Dario Amodei published a 20,000-word essay titled "The Adolescence of Technology." His thesis: humanity is about to be handed power it does not yet possess the institutional maturity to wield, and we are meaningfully closer to real danger now than we were three years ago.

Dario should know: Anthropic has been at the forefront of driving AI innovation and adoption since its founding in 2021. Anthropic's annualized revenue went from roughly $1 billion at the start of 2025 to $30 billion by April 2026, passing OpenAI and registering what observers have called the fastest enterprise software ramp on record. Eight of the Fortune 10 are now Claude customers, Claude Code crossed $1 billion in annualized revenue within six months of launch, and the Model Context Protocol, Anthropic's open standard for connecting AI agents to external systems, surpassed 97 million installs by March. Indeed, Dario is seeing this power evolve firsthand.

The essay argues that AI capable of outperforming Nobel laureates across most cognitive fields could arrive as early as 2027. Dario calls this scenario "a country of geniuses in a datacenter" and frames it as potentially the most serious national security threat in a century. He has separately put his personal probability of catastrophic, potentially civilization-ending outcomes from advanced AI at roughly 25 percent. Among the leading AI thought leaders, that figure sits toward the lower end of what is privately contemplated.

The essay was widely read in Silicon Valley, more quietly absorbed in policy circles, and barely discussed in any boardroom I spoke to. That gap, between what the people building this technology are publicly saying about its risks and what corporate boards are doing in response, is the core governance challenge of the AI era.

What’s Your P(doom)?

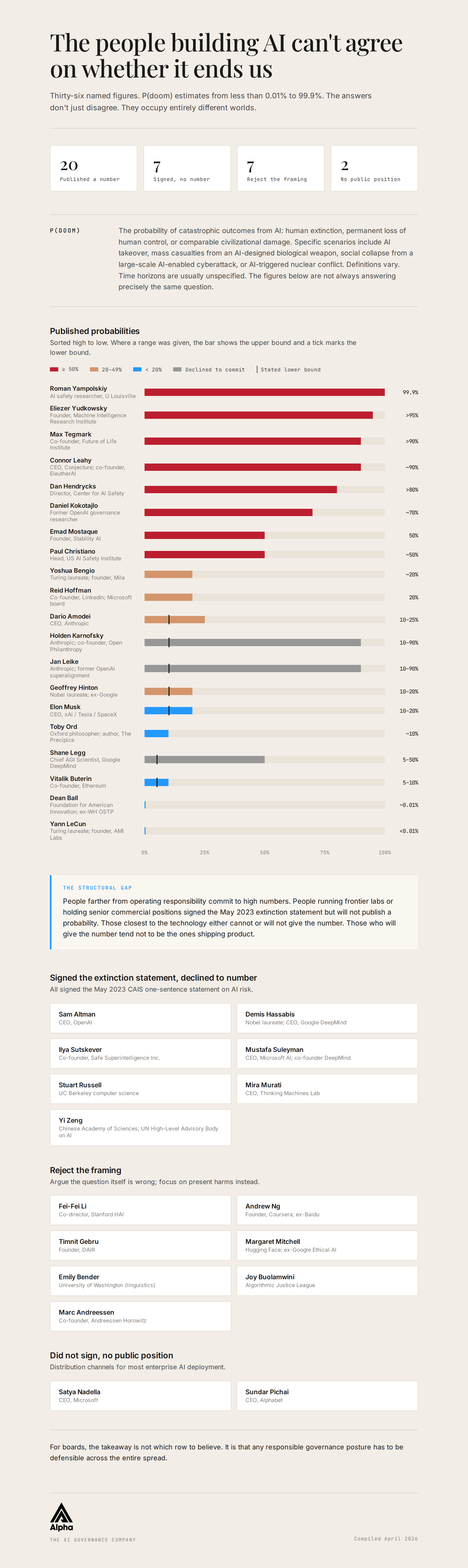

The shorthand the AI community uses for what Dario is describing is p(doom): the probability of doom. It originated in rationalist forums in the late 2000s, more than a decade before ChatGPT made it dinner-party material, as a compact way for forecasters to communicate uncertainty about catastrophic AI outcomes without writing a paper to do it.

The definition is loose by design, and the looseness is half the story. "Doom" usually means human extinction. Sometimes it means a broader category: the permanent and drastic curtailment of humanity's potential, the loss of meaningful human agency over civilization's direction, or a state of irreversible disempowerment in which humans survive but no longer determine their own future. The time horizon is often unspecified. Two people who both say "my p(doom) is twenty percent" may be answering meaningfully different questions.

That ambiguity is precisely why the academic community treats p(doom) less as a true probability and more as a piece of social data: a signal of expert sentiment rather than a calibrated forecast. The 2023 Expert Survey on Progress in AI, conducted by AI Impacts and led by Katja Grace, surveyed 2,778 AI researchers and found a mean estimate of 14.4 percent and a median of 5 percent for "extremely bad outcomes" from AI within the next century. The mean is pulled upward by a minority of respondents assigning very high probabilities. The median reflects a more typical view. The divergence itself is the signal: a non-trivial share of the field is assigning serious weight to catastrophic outcomes.
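To see how a small minority can pull a mean so far above a median, here is a minimal sketch using an invented ten-person sample. The numbers are illustrative assumptions chosen to land on the same summary statistics the survey reported. They are not actual survey responses.

```python
from statistics import mean, median

# Hypothetical estimates (in percent) from ten imaginary respondents.
# These are NOT survey data; they are chosen only to illustrate skew.
toy_estimates = [1, 2, 2, 5, 5, 5, 5, 9, 40, 70]

toy_mean = mean(toy_estimates)
toy_median = median(toy_estimates)

# Share of the total contributed by the two highest respondents.
top_two_share = sum(sorted(toy_estimates)[-2:]) / sum(toy_estimates)

print(f"mean:   {toy_mean:.1f} percent")
print(f"median: {toy_median:.1f} percent")
print(f"top two respondents' share of the total: {top_two_share:.0%}")
```

In this toy sample, two of the ten respondents account for roughly three quarters of the total, which is why the mean lands near three times the median even though the typical answer is 5 percent.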

Strip away the academic framing and the message becomes stark. The people building these systems are publicly assigning a non-zero probability to outcomes as severe as civilizational collapse. In any other domain, a 5-to-14 percent risk, whether of a credit downgrade, a major recall, or a systemic breach, would trigger immediate board-level escalation.

The risk in question is not operational or financial. It is existential. The uncomfortable truth is not just that the average estimate is nearly three times the median. It is that any non-zero probability of "extremely bad outcomes" is enough to demand attention.

The Spread

The lowest credible figure on record comes from Yann LeCun, the Turing Award laureate who built the modern foundations of computer vision and until recently served as chief AI scientist at Meta. Yann places his p(doom) at less than 0.01 percent, comparing it to the probability of an asteroid impact. The highest comes from Roman Yampolskiy, an AI safety researcher at the University of Louisville. On Lex Fridman's podcast in June 2024, Roman put his p(doom) at 99.9 percent within this half-century, with particular focus on the 2026-to-2035 window.

Between those two endpoints sit the people whose decisions will determine, more than any policymaker, what enterprise AI looks like for the next decade.

What The AI Researchers Say

Geoffrey Hinton, whose decades of work on neural networks underpins much of modern AI, was awarded the 2024 Nobel Prize in Physics for foundational contributions to machine learning. He now publicly estimates roughly a 50-50 chance that AI will become more intelligent than humans within about twenty years, and a 10-to-20 percent chance that this could lead to loss of human control or even human extinction over the coming decades. By any normal standard of risk tolerance in corporate governance or public safety, a leading expert assigning double-digit odds to a civilization-level catastrophe is properly treated as an emergency.

Yoshua Bengio, who shared the 2018 Turing Award with Geoffrey Hinton and Yann LeCun for their foundational work on deep learning, has publicly placed the probability that advanced AI could lead to human-level catastrophe on the order of twenty percent. He often explains this as the product of two judgments: roughly even odds that we achieve artificial general intelligence within about a decade, and a substantial, greater-than-even chance that such systems, or the humans directing them, could end up using the technology against humanity at scale.

Sam Altman, CEO of OpenAI, has never publicly committed to a specific numerical probability for AI-driven human extinction. He is, however, a signatory to the May 2023 Center for AI Safety statement that says "mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war." In interviews he has emphasized his confidence that researchers and regulators can figure out how to avoid catastrophic outcomes. That expression of faith is not itself a probability estimate.

Demis Hassabis, the CEO of Google DeepMind, has said there is a "non-zero" chance that advanced AI leads to a catastrophic scenario and, like other lab heads, signed the May 2023 Center for AI Safety statement. At Davos in January 2026, he again placed artificial general intelligence on a roughly five-to-ten-year horizon, often framed as around a 50 percent chance of human-level AGI by 2030, while continuing to resist giving a specific numerical p(doom).

The people farther from operating decisions tend to give higher numbers.

Max Tegmark, MIT physicist and cofounder of the Future of Life Institute, has placed his p(doom) at over 90 percent absent meaningful regulation, citing MIT research he co-authored showing that the most popular proposed approach for controlling superintelligence, nested scalable oversight, fails 92 percent of the time even under optimistic assumptions. Daniel Kokotajlo, a former OpenAI governance researcher who resigned in 2024 after losing confidence in the company's safety trajectory, has publicly estimated around a 70 percent chance that advanced AI will destroy or catastrophically harm humanity. Dan Hendrycks, who directs the Center for AI Safety and advises xAI on safety, has said his p(doom) rose from roughly 20 percent to more than 80 percent over just two years as AI capabilities scaled and competitive pressure intensified. Jan Leike, who led OpenAI's superalignment team before resigning over safety-culture concerns and joining Anthropic, has deliberately kept his own estimate vague, saying only that it is "more than 10 percent, less than 90 percent." Paul Christiano, now leading research at the U.S. AI Safety Institute, has placed his overall probability that advanced AI leads to catastrophic outcomes at roughly 50 percent.

The skeptics give numbers as low as the doomers' are high.

Vitalik Buterin of Ethereum points to the 2022 survey of more than 4,000 machine learning researchers that estimated roughly a 5-to-10 percent chance that advanced AI could wipe out humanity, and treats that order of magnitude as a serious baseline. Elon Musk, whose xAI is building one of the largest training clusters in the world, has put his own p(doom) in public remarks at around 10 to 20 percent even as he continues to scale capital expenditure into frontier AI. Marc Andreessen of Andreessen Horowitz has not given a number and argues in his "Why AI Will Save the World" essay that those pushing for strict AI regulation on existential-risk grounds constitute an "AI risk cult," casting x-risk-motivated regulation as a kind of secular apocalypse cult.

The Silent Signatories

A third category matters as much as the doomers and the skeptics: senior figures who signed the May 2023 Center for AI Safety statement, formally placing AI extinction risk in the company of pandemics and nuclear war, but who have declined to publish a personal probability.

Ilya Sutskever, the OpenAI co-founder who left in 2024 to start Safe Superintelligence Inc., has spoken publicly about his concern that a misaligned superintelligence could permanently disempower humanity or even lead to human extinction, but has not committed to a specific numerical p(doom). Mustafa Suleyman, the DeepMind co-founder now serving as CEO of Microsoft AI, devoted his 2023 book The Coming Wave to arguing that artificial intelligence and synthetic biology together create civilization-scale risks, with plausible pathways to existential catastrophe, and has likewise not put a single number on that risk.

Stuart Russell, the UC Berkeley computer scientist whose 2019 book Human Compatible is widely treated as the standard academic text on the AI alignment and control problem, has consistently warned that advanced AI poses a serious existential risk but has not given a precise p(doom) estimate. Mira Murati, OpenAI's former CTO, founded Thinking Machines Lab in 2024 and in July 2025 closed an approximately $2 billion seed round at a $12 billion valuation, the largest seed financing in venture capital history, while publicly aligning herself with the view that AI risks are serious enough to warrant major investment in safety and control, but without naming a specific probability.

The pattern is not a coincidence. The closer a person sits to operational responsibility for a frontier AI system, or to the institutional credibility that depends on continuing to ship one, the less likely they are to commit to a public probability of catastrophic outcomes. This is not a moral failing. It is a structural fact about what it costs them to be wrong. A CEO who publishes 30 percent and is right has gained little. A CEO who publishes 30 percent and is wrong on the high side has invited the question of why they kept building. A CEO who publishes 5 percent and is wrong on the low side has invited a different question entirely, in a courtroom.

Their silence is itself telling.

Those Who Reject The Question

A taxonomy of doomers, skeptics, and decline-to-count signatories misses a fourth position that has become more visible as the technology has accelerated: researchers who argue that the focus on existential risk is itself a governance failure, because it crowds out attention to the harms AI is already causing.

The most prominent voice in this camp is Fei-Fei Li, the Stanford computer scientist who built ImageNet, the dataset that made modern deep learning possible. Fei-Fei is now co-director of the Stanford Institute for Human-Centered Artificial Intelligence (HAI) and founder of World Labs. She did not sign the May 2023 extinction statement. She has publicly described the focus on human-extinction risk as belonging to "the world of sci-fi." Her concern is not that catastrophic outcomes are impossible. It is that talking about them displaces attention from misinformation, labor disruption, bias in deployed systems, privacy erosion, and the increasing concentration of AI capability inside a small number of private companies.

Timnit Gebru, the former co-lead of Google's Ethical AI team and now founder of the Distributed AI Research Institute (DAIR), makes a sharper version of the same argument. So do Margaret Mitchell at Hugging Face, Emily M. Bender at the University of Washington, and Dr. Joy Buolamwini at the Algorithmic Justice League. None has published a p(doom) figure. All argue, in different terms, that the doom framing distorts the policy conversation by making AI risk feel like a problem of the future when it is also, demonstrably, a problem of the present.

Andrew Ng, the founder of Coursera, founding lead of Google Brain, and former chief scientist at Baidu, makes a different version of the same critique. In 2024 he led a coalition of AI optimists opposing California's SB 1047 on the grounds that the existential-risk framing was being used to entrench incumbent labs at the expense of beneficial deployment. Both Fei-Fei and Andrew end up at the same critique of the doomers: not that they are wrong about the magnitude, but that they are wrong about which question to ask first.

These critiques deserve consideration, because both things can be true. Some people will focus on extinction risk. Others will focus on the more tangible risks of model bias, privacy violations, and workforce displacement. Is there a right or wrong answer? Either way, the opportunities and the risks need to be discussed.

This Is Not A Drill

A standard revolver has six chambers. Load one with a live round, spin the cylinder, point the gun at your head, and pull the trigger. You have a one-in-six chance of dying, roughly 17 percent. No reasonable person plays this game.

That 17 percent figure sits below the public p(doom) estimates of Yoshua Bengio at 20 percent, Geoffrey Hinton at "kinda 50-50," and Dan Hendrycks at more than 80 percent. It sits inside the range Dario Amodei has given as his personal p(doom) at 10 to 25 percent. These numbers are not abstract. They are the probability of human extinction or comparable civilizational catastrophe. There is no second draw. A 1 percent chance of the species ending is not a figure any reasonable person should accept calmly. Ten percent should trigger emergency action across every government on earth. We are playing real-life Russian roulette and we do not even know it. Yet the people quoting these numbers are not screaming. They are continuing to build. The reason is not that they disbelieve their own estimates. It is that they believe the technology compounds.

The mechanism is what Ray Kurzweil named the Law of Accelerating Returns. Each generation of technology builds on the last faster than the last did, and at the limit, the curve goes vertical. Applied to artificial intelligence, the implication is recursive self-improvement: an AI system capable of meaningfully contributing to the design of the next generation of AI does not improve linearly. The data Epoch AI tracks already shows the early stages of this curve in motion. Training compute up roughly five times annually. Algorithmic efficiency up about three times per year. Inference costs halving every two months. Global compute capacity doubling every seven months. The next decade will not be a smooth extrapolation of the last.
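Because those four rates are quoted on different clocks (per year, every two months, every seven months), it can help to put them on the same annual footing. The sketch below is only arithmetic on the figures quoted above. The helper name `annual_factor` is my own, and the three-year column assumes each rate simply holds steady, which is an assumption rather than a forecast.

```python
def annual_factor(multiple: float, period_months: float) -> float:
    """Growth factor over 12 months if a quantity is multiplied by
    `multiple` once every `period_months` months."""
    return multiple ** (12 / period_months)


# Rates as quoted in the text above (attributed there to Epoch AI).
trends = {
    "training compute (5x per year)": annual_factor(5, 12),
    "algorithmic efficiency (3x per year)": annual_factor(3, 12),
    "inference cost (halving every 2 months)": annual_factor(0.5, 2),
    "global compute capacity (doubling every 7 months)": annual_factor(2, 7),
}

for name, factor in trends.items():
    # Assumes the quoted rate holds unchanged for three years.
    three_year = factor ** 3
    print(f"{name}: x{factor:.4g} per year, x{three_year:.4g} over three years")
```

Halving every two months compounds to a 64-fold cost reduction in a single year, and doubling every seven months works out to a bit more than a tripling per year. That is what "not a smooth extrapolation" looks like in numbers.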

That vertical move forces a question civilization has never had to answer. If intelligence itself becomes a commodity, what happens to labor? For most of recorded economic history, the scarcity of cognitive work has been the floor under wages. Take that floor out and the relationship between capital and labor decouples. Emad Mostaque, the founder of Stability AI and one of the few sitting AI-company founders with a published p(doom) of 50 percent, has framed the endgame in a sentence: the value of human labor could go negative. He means that in a world where AI performs cognitive work cheaper than humans can, humans become a cost on the balance sheet without an offsetting contribution. It seems inevitable right now, but it remains a possibility whose outcome depends entirely on the choices governments and boards make in the next few years.

This is why p(doom) is not just dinner-party talk. When the people who have spent their careers building artificial intelligence tell you they believe there is a non-trivial chance their work ends humanity, the only responsible response is to take them at their word and act accordingly.

The Acceleration Is Observable

The clearest evidence that this debate matters now, not later, is what has happened in the four months since Dario's essay was published.

In June 2024, Leopold Aschenbrenner, a former OpenAI Superalignment researcher, published a 165-page essay titled "Situational Awareness: The Decade Ahead." Its central claim was that the trendlines in compute, algorithmic progress, and capability "unhobbling" pointed to AGI by 2027, an intelligence explosion within roughly a year of that, and superintelligence by 2030. The essay was widely treated at the time as a maximalist forecast at the edge of credibility. Over the past four months, the trendlines have moved closer to Leopold's, not farther.

Just look at the last four months. In mid-January 2026, Anthropic released Claude Cowork, a desktop agent that brings agentic capabilities to non-technical knowledge workers. On February 5, Claude Opus 4.6 launched with a 14.5-hour task completion horizon, the longest ever measured, and added agent teams capable of splitting complex work across coordinating instances. On February 17, Sonnet 4.6 followed. On April 7, Anthropic previewed Claude Mythos, a frontier model whose cybersecurity capabilities the company judged too dangerous to release publicly. On April 16, Claude Opus 4.7 became generally available. On April 23, three days before this essay was written, OpenAI released GPT-5.5, six weeks after the launch of GPT-5.4. On the press call announcing GPT-5.5, Greg Brockman, OpenAI's president, called it "a real step forward towards more agentic and intuitive computing." And as a reminder, Jakub Pachocki, OpenAI's chief scientist, used a different framing on the same call: "I think the last two years have been surprisingly slow."

Slow.

The chief scientist of one of the three companies whose foundation models power the enterprise software stack of most of the Fortune 500 is publicly stating that the period during which ChatGPT crossed 900 million weekly active users and AI capability benchmarks moved up and to the right on essentially every axis was slower than the rate of progress he expects going forward.

We are so not ready for the exponential.

Ethan Mollick, the Wharton professor and author of Co-Intelligence, has framed the underlying question more cleanly than anyone. Every AI discussion ultimately rests on two questions: how good can AI get, and how fast? Everything else, from job impact to regulatory pressure to p(doom) itself, is downstream of the shape of that s-curve. The reason published p(doom) estimates span 99 points is not that some experts are smarter than others. It is that no one, including the people building the technology, can yet answer Ethan's two questions with confidence.

Yet we do know that every benchmark on every leaderboard is moving in one direction: up and to the right. Every model release is closer in time to the one before it than the one before that. Every capability that was supposed to be five years out (agentic computing, autonomous coding, multi-step research, professional-grade document creation) has arrived in less than two. Leopold's intelligence explosion was supposed to be a phenomenon of the late 2020s. In the last four months, the curve has moved from theoretical to legible.

The next four months will not be slower.

They will be faster. Much faster.

The Disagreement Is the Signal

The natural human instinct, faced with a 99-point spread, is to argue about who is right. That instinct is wrong.

The gap reveals two things at once, and both are true. The skeptics are right that nobody has produced a rigorous, falsifiable model that justifies the high estimates with statistical confidence. The doomers are right that nobody has produced one that justifies the low estimates either. Both groups are pricing the same uncertainty. The disagreement is a function of priors rather than evidence, because no shared evidence exists. There is no historical reference class for what happens when a technology doubles in capability every few months.

When two equally credentialed groups of people looking at the same technology give estimates that differ this widely, the takeaway is that the technology is harder to forecast than either group is willing to publicly admit. Translation: no one has a clue what is going to happen, but whatever it is, it will transform life as we know it.

In credit markets, when bond pricing diverges this widely, the underlying instrument is described as a Level 3 asset: no observable market price, valuation by model only, with the spread itself counted as a risk indicator. Frontier AI is the Level 3 asset of the global economy in 2026. The board's job is not to resolve the spread. It is to govern the company's exposure to it.

Why This Matters in Your Boardroom

Three facts deserve direct attention from every audit, risk, and full board meeting.

First, the CEOs of the three most important AI companies have all publicly acknowledged catastrophic risk. Sam Altman, Demis Hassabis, and Dario Amodei all signed the May 2023 statement. These are the same vendors providing the foundation models embedded inside the enterprise software stack your company already buys. When the CEO of your foundational AI vendor warns publicly, as Dario did in January, that the technology has crossed into more dangerous territory than it occupied three years ago, that statement crosses any reasonable threshold for materiality. It belongs in board minutes. Equally relevant is who has not signed. Satya Nadella at Microsoft (NASDAQ: MSFT) and Sundar Pichai at Alphabet (NASDAQ: GOOGL), the chief executives of the two companies through which most enterprise AI deployment now flows, did not put their names on the May 2023 statement and have not published personal p(doom) estimates. Their products are how the foundation models built by Anthropic, OpenAI, and Google DeepMind reach your employees. Their public neutrality on the catastrophic-risk question is a procurement-relevant data point. A board considering its AI vendor stack should note that the people running the largest distribution platforms have declined to take a public position on the magnitude of the risk their platforms now carry.

Second, your D&O underwriters are reading the same disagreement you are. Aon's "AI Risk 2026" guidance and counterpart materials from Marsh and Willis Towers Watson all link governance maturity to insurance terms. The actuarial layer of corporate America does not need to resolve the p(doom) debate. It needs to price the disagreement. It is already doing so. By your next D&O renewal, the question of whether your board has documented its AI governance posture will affect the premium you pay.

Third, the EU AI Act becomes enforceable in August 2026, with penalties of up to 7 percent of global revenue. The question regulators will ask is not "what is your p(doom)?" The question is "what evidence can you produce that your board considered the risks the technology providers themselves disclosed?"

The strongest boards I have seen this year are doing three concrete things.

- They are documenting their AI risk thesis in board minutes, not their p(doom) but their p(deployment risk): if a foundation model fails, hallucinates a material output, is recalled, or is sanctioned in a major jurisdiction, what is the company's exposure, and which committee owns it?

- They are treating safety, regulatory posture, and cybersecurity resilience as procurement criteria for any AI vendor, with the same rigor previously reserved for financial controls.

- They are mapping AI oversight to existing committee structures rather than creating standalone AI committees that lack charter authority. This is what Alpha calls Committee Accountability Mapping. AI risk is not a new category. It is an existing risk on a faster clock.
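For readers who think in data structures, the three practices above reduce to a register in which every deployment-risk scenario has a named owner. The sketch below is one hypothetical way to lay that out. The scenarios come from the list above, but the class name, the exposure descriptions, and the committee assignments are all illustrative assumptions, not a template any particular board uses.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DeploymentRisk:
    scenario: str          # what could go wrong with a foundation model
    exposure: str          # plain-language description of the exposure
    owner: Optional[str]   # accountable committee, or None if unassigned


# Scenarios are from the text; exposures and owners are hypothetical.
register = [
    DeploymentRisk("model fails", "customer-facing outage", "Risk Committee"),
    DeploymentRisk("model hallucinates a material output",
                   "misstated disclosure", "Audit Committee"),
    DeploymentRisk("model is recalled", "vendor dependency", "Risk Committee"),
    DeploymentRisk("model is sanctioned in a major jurisdiction",
                   "regulatory exposure", None),
]


def unowned(risks):
    """Return the scenarios that no committee has been assigned to own."""
    return [r.scenario for r in risks if r.owner is None]


def by_committee(risks):
    """Group owned scenarios under the committee accountable for them."""
    grouped = {}
    for r in risks:
        if r.owner is not None:
            grouped.setdefault(r.owner, []).append(r.scenario)
    return grouped


print("Unowned:", unowned(register))
print("By committee:", by_committee(register))
```

The useful output is the unowned list. Any scenario that appears there is a risk the board has named but no committee is accountable for.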

The End Of "Noses In, Fingers Out"

For a generation, the governing maxim of corporate boards has been "noses in, fingers out." Directors oversee. Management executes. The line held because management operated on human timescales: decisions were made by people, in meetings, with paper trails a board could review at the next quarterly session.

That premise is gone. When AI agents inside the enterprise stack take actions in milliseconds, signing contracts, routing capital, writing code, sending communications, interacting with customers, the question of whose fingers are on the work no longer has a clean answer. The board's noses are still in. The fingers now belong to systems no committee was designed to oversee. The gap between AI deployment velocity and governance infrastructure has a name: Governance Debt. It is compounding in every public company.

The leading edge of this transition is no longer theoretical. On March 31, 2026, Jack Dorsey and Sequoia Capital's Roelof Botha co-authored an essay titled "From Hierarchy to Intelligence," describing Block's deliberate restructuring from hierarchical organization to what they call an intelligent enterprise. The essay followed a February 2026 layoff of approximately 4,000 employees, roughly 40 percent of Block's workforce. Jack was explicit that the cuts were not a cost reduction but a permanent structural change. Block's Q4 2025 gross profit had grown 24 percent year over year. The reorganization was a thesis, not a retrenchment.

The argument is structural, and worth boards reading in full. For two thousand years, from the Roman contubernium to the Prussian general staff to the modern corporate matrix, organizations have used human hierarchy as an information-routing protocol. Span of control was the constraint: a manager can effectively oversee only a handful of people, so scale required layers, and layers slowed the flow of information. Jack and Roelof's bet is that AI is the first technology capable of performing the function those layers exist to provide.

Block is reorganizing around four components: capabilities (the atomic financial primitives like payments, lending, and card issuance), a continuously updated world model of its own operations and its customers, an intelligence layer that composes capabilities into solutions in real time, and the interfaces (Square, Cash App, Afterpay, TIDAL) through which those solutions are delivered. The human org chart compresses to three roles: individual contributors who build and operate the system, directly responsible individuals who own specific outcomes on 90-day cycles, and player-coaches who combine building with developing people. There is no permanent middle-management layer because the system performs the coordination function that layer used to provide.

Block is one company, early in this transition, and whether the model holds at full scale is an empirical question. Current Block employees told The Guardian that roughly 95 percent of AI-generated code changes still require human modification, and regulatory constraints in banking limit how much decision authority can be delegated to AI systems today. Both are fair caveats. What is not contested is the direction of travel. As Jack put it in conversation with Sequoia, every company will eventually need to confront the same question: what does your company understand that is genuinely hard to understand, and is that understanding compounding? If the answer is nothing, AI becomes a cost-cutting story that ends in commoditization. If the answer is meaningful, the company eventually reorganizes around the model.

That is the agentic enterprise.

It is what your company is on track to become whether or not your board has used the term.

The doctrine that replaces "noses in, fingers out" rests on a simple recognition. A board cannot review every action taken between meetings by autonomous systems running at machine speed. It can govern the design of those systems, the authorities granted to them, the guardrails and kill switches in place, and the disclosure regime that makes their behavior legible to the directors accountable for them. Govern the design, not the decisions. That is what continuous governance of the agentic enterprise requires of every board.
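To make "govern the design" concrete, here is a minimal sketch of what a board-approved design envelope for a single agent could look like. Everything in it is an illustrative assumption rather than a description of any real system: the `AgentCharter` name, its fields, the action names, and the dollar limit are all hypothetical. It shows only the four things the doctrine asks a board to govern: granted authorities, a guardrail, a kill switch, and a record that makes behavior legible afterward.

```python
from dataclasses import dataclass, field


@dataclass
class AgentCharter:
    """Hypothetical design envelope a board might approve for one AI agent."""
    name: str
    allowed_actions: set[str]          # authorities granted to the agent
    max_spend_usd: float               # guardrail: cap on any single action
    kill_switch_engaged: bool = False  # when True, every request is refused
    audit_log: list[dict] = field(default_factory=list)  # disclosure record

    def authorize(self, action: str, amount_usd: float = 0.0) -> bool:
        """Check one requested action against the charter and log the outcome."""
        if self.kill_switch_engaged:
            reason = "kill switch engaged"
        elif action not in self.allowed_actions:
            reason = "action outside granted authority"
        elif amount_usd > self.max_spend_usd:
            reason = "amount exceeds spending guardrail"
        else:
            reason = "within charter"
        allowed = reason == "within charter"
        # Every decision is recorded, whether it was allowed or refused.
        self.audit_log.append(
            {"action": action, "amount_usd": amount_usd,
             "allowed": allowed, "reason": reason}
        )
        return allowed


# Illustrative usage with made-up values.
charter = AgentCharter(
    name="procurement-agent",
    allowed_actions={"send_quote", "sign_contract"},
    max_spend_usd=50_000.0,
)
within_limits = charter.authorize("sign_contract", 20_000.0)  # inside the envelope
outside_scope = charter.authorize("route_capital", 1_000.0)   # authority never granted
```

The point of the sketch is the division of labor. Directors never see the individual `authorize` calls. They approve the charter, and they review the log.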

The Collision In Real Time

Two flashpoints, both inside the same window as the model releases described above, illustrate what the gap between governance cadence and technology cadence already looks like.

The first is the Pentagon-Anthropic dispute. On February 27, 2026, Defense Secretary Pete Hegseth, in conjunction with a presidential directive, designated Anthropic a "supply chain risk" to U.S. national security and ordered all federal agencies to cease using the company's technology. The dispute had a specific origin: Anthropic refused to remove contractual restrictions preventing Claude from being used for fully autonomous weapons or domestic mass surveillance. The Pentagon wanted unfettered access for what it called "all lawful purposes." The two sides could not agree, and the federal government took the unprecedented step of blacklisting one of the three most important AI companies in the world.

On March 26, U.S. District Judge Rita Lin issued a 43-page ruling indefinitely blocking the designation, calling it "Orwellian" and finding that it appeared to be First Amendment retaliation. On April 8, in a parallel proceeding, a federal appeals court in Washington declined to grant Anthropic a similar stay. As of late April, Anthropic is litigating in two courts while continuing to ship products to enterprise customers, and the National Security Agency is reportedly running Claude on classified networks even as the Department of War seeks to bar Anthropic from federal contracting. The federal posture is not coherent. The dispute is not resolved. The technology has not paused.

The second is Project Glasswing. On April 7, Anthropic announced a preview release of Claude Mythos, a frontier model so capable at finding software vulnerabilities that the company refused to release it publicly. Anthropic distributed it instead to eleven named launch partners: Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks. Roughly forty additional organizations were granted access for defensive security work. Within two weeks, Mozilla disclosed that Mythos had identified 271 vulnerabilities in a single test run against the Firefox browser, more than 12 times what Anthropic's prior model had found in the same codebase. In pre-release testing, Mythos identified a 27-year-old bug in OpenBSD, an operating system designed specifically to be hard to attack, and a 16-year-old flaw in FFmpeg that automated scanners had checked roughly five million times without flagging.

The dual-use problem is not theoretical. The UK AI Security Institute evaluated Mythos and confirmed it can execute autonomous multi-stage network attacks, completing a 32-step corporate-network attack simulation three out of ten times. The same model that helped Mozilla patch 271 vulnerabilities in two weeks can be turned, at the prompt level, into an offensive cybersecurity weapon. That is why Anthropic restricted access. On the same day Anthropic announced the program, an unauthorized group obtained access to Mythos through a third-party vendor environment.

What April 2026 looks like, in real terms, is this. A frontier model so powerful that its developer refuses to release it publicly. A federal government that cannot decide whether to ban that developer or use its technology on classified networks. Software vulnerabilities being discovered at twelve times the rate of three months ago. The most powerful AI security tool ever built, breached on day one. None of these stories was on the agenda of any board meeting in January. All of them are now governance-relevant in ways that periodic oversight cannot keep up with.

Where This Is Going

The Pentagon-Anthropic dispute is not an isolated event. It is the leading edge of a transition the AI industry has been quietly anticipating for at least two years: the moment when frontier AI moves from being a commercial sector under light-touch oversight to being a strategic national-security asset, the way governments treated nuclear, semiconductor, and aerospace capabilities in the second half of the twentieth century.

Leopold's "Situational Awareness" predicted that by 2027 or 2028 the United States government would launch "some form of government AGI project." That timeline now looks conservative. The Pentagon's effort to designate Anthropic a supply chain risk was, structurally, the first attempt to apply national-security tooling to a frontier AI lab. The legal infrastructure it tested (the Federal Acquisition Supply Chain Security Act, or FASCSA; Section 3252 of Title 10; and the threatened invocation of the Defense Production Act) will be tested again. The dispute is paused in court. The underlying tension between commercial AI sovereignty and national-security access is unresolved and structural.

Dean Ball, who served as senior policy advisor for AI at the White House Office of Science and Technology Policy in 2025 and was the primary drafter of the Trump administration's AI Action Plan, now writes from the Foundation for American Innovation. His position is instructive precisely because he is skeptical of the existential-risk framing as a basis for regulation. Dean's argument is that the more important policy question is whether American AI capability will outpace foreign competition, and that the policy frame is now structured around three pillars: innovation, infrastructure, and international security. The third pillar treats frontier AI as a sovereign concern equal in weight to the first two. The people drafting U.S. AI policy do not see frontier AI as a commercial product. They see it as a national-strategic capability that happens, for now, to live inside private companies.

The same posture is converging globally. The United Kingdom's AI Safety Institute renamed itself the AI Security Institute in 2025 to reflect a shifted mandate. The European Union's AI Act, fully enforceable in August 2026, is a sovereignty regulation as much as a safety regulation. The People's Republic of China has operated AI as a state-strategic asset since its 2017 New Generation Artificial Intelligence Development Plan. Saudi Arabia, the United Arab Emirates, and India have each announced sovereign AI initiatives in the past eighteen months.

The era of frontier AI as a purely commercial market is closing.

So here are some predictions to consider (fully acknowledging the inherent risk of making predictions).

- Within 24 months, some form of federal disclosure or licensing regime will exist for frontier AI development in the United States. It will likely emerge not from new legislation but from existing authorities applied in new ways: FASCSA-style designations, export controls on model weights as well as chips, classified-network requirements, mandatory pre-deployment evaluations, or some combination. Boards whose companies depend on frontier AI vendors will want to know which authorities apply to which vendor stack, and which scenarios trigger them.

- Within the same window, the line between commercial AI and national-security AI will become operationally blurry in ways the current corporate governance regime does not anticipate. The Anthropic case already shows what this looks like in practice: a federal blacklist on one front while the NSA reportedly runs the same company's most sensitive model on classified networks. Boards will need to understand not only which AI models their employees are using, but which versions of those models exist behind which government barriers, and what compliance regimes apply when a model crosses jurisdictions.

- Sovereign AI will become a board-level procurement question, not just a regulatory one. Companies that buy and deploy AI at scale will increasingly be asked, in 10-K disclosures and proxy statements, to justify the jurisdictions of the vendors they selected. European subsidiaries will face pressure to use European-developed AI. Chinese subsidiaries will face restrictions on American-developed AI. American multinationals will navigate three or four overlapping regulatory regimes simultaneously. The era of choosing AI vendors purely on capability and price is ending.

- Within 36 months, at least one frontier AI laboratory in the United States will operate under some form of permanent federal compulsion: mandatory licensing, classified-track development obligations, government-required board observers, or full or partial nationalization in the event of a national-security crisis or successful adversary cyber operation against the lab itself. The Pentagon-Anthropic case will look, in retrospect, like the precondition. Boards should plan for a world in which their AI vendors operate under federal constraints their other vendors do not.

What Governance Must Become

For most of the past century, corporate governance has operated on a periodic rhythm. Quarterly board packs. Annual audits. Weekly status reports. Each mechanism assumed the underlying business moved slowly enough that a periodic snapshot could meaningfully capture its risk profile. That premise no longer holds.

When AI agents inside the enterprise stack make decisions in milliseconds, when foundation models ship on a near-weekly cadence, when the AI development curve begins to go vertical, the gap between board oversight and operational reality is no longer measured in weeks. It is measured in orders of magnitude. By the time a quarterly board pack reaches directors, the AI environment it describes has already changed.

The governance answer is not faster packs or more frequent meetings. It is a different posture entirely: continuous governance of the agentic enterprise. The same way frontier AI now runs continuously, governance has to run continuously. The same way agents make decisions in milliseconds, governance frameworks have to detect, classify, and respond to those decisions in something closer to real time. The same way capability scales exponentially, governance maturity has to compound rather than recalibrate at quarterly intervals.

Continuous governance includes AI Safety and Robustness, Governance Infrastructure, Cybersecurity and AI Resilience, and Third-Party and Supply Chain Governance. It is what Committee Accountability Mapping operationalizes: every AI risk routed to the existing committee that already owns the analogous non-AI risk. It is what Governance Debt names: the gap between AI deployment velocity and governance infrastructure that, left unaddressed, becomes the next decade's primary source of director liability.

That is what p(doom) is really asking us to take seriously. Not the abstract question of whether the species survives. The concrete question of whether the institutions we have built to oversee technology can keep pace with the technology they are trying to oversee. The p(doom) estimates offered by AI leaders signal how seriously the underlying engineering question is being treated by the people closest to it. The answer for boards, for institutional investors, for D&O underwriters, for the people who actually allocate capital in this economy, is to build governance that runs at the speed of the systems it governs.

Your AI agents have acted ten thousand times since your last board meeting. Your governance framework hasn't kept up with those decisions. That gap, between the speed of AI and the cadence of oversight, is the governance challenge of the next decade. Closing it is not optional.

What To Do Monday Morning

Before your next board meeting, ask management for one document: the company's AI vendor inventory, mapped to the contractual terms governing each model's use, the jurisdictions in which each vendor is regulated, and the committee that owns oversight of each. If your team cannot produce that document in 48 hours, the document itself is the meeting agenda.
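For teams that want a starting point, the inventory can be sketched as a simple record per model plus a completeness check. This is a minimal sketch under stated assumptions: the `VendorEntry` fields mirror the four things named above (vendor and model, contractual terms, jurisdictions, owning committee), while the class name, function names, and the example vendors and committees are hypothetical placeholders, not a standard or a recommendation.

```python
from dataclasses import dataclass


@dataclass
class VendorEntry:
    """One row of a hypothetical AI vendor inventory."""
    vendor: str
    model: str
    contract_terms: str        # summary of, or pointer to, terms governing use
    jurisdictions: list[str]   # where the vendor is regulated
    oversight_committee: str   # committee that owns oversight of this model


# Fields that must be filled in before a row counts as complete.
REQUIRED_FIELDS = ("contract_terms", "jurisdictions", "oversight_committee")


def missing_fields(entry: VendorEntry) -> list[str]:
    """Return the required fields that are empty for one entry."""
    return [name for name in REQUIRED_FIELDS if not getattr(entry, name)]


def inventory_gaps(inventory: list[VendorEntry]) -> dict[str, list[str]]:
    """Map 'vendor/model' to its missing fields, omitting complete entries."""
    gaps = {}
    for entry in inventory:
        missing = missing_fields(entry)
        if missing:
            gaps[f"{entry.vendor}/{entry.model}"] = missing
    return gaps


# Illustrative data only; the vendors, models, and committees are made up.
inventory = [
    VendorEntry("Vendor A", "model-x", "MSA section 4: no autonomous payments",
                ["US", "EU"], "Risk Committee"),
    VendorEntry("Vendor B", "model-y", "", ["US"], ""),
]
gaps = inventory_gaps(inventory)
```

Here `gaps` flags only the second entry, which has no contract terms and no owning committee on record. A non-empty result is the practical version of the test above: whatever is missing becomes the agenda.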

The companies that build continuous governance of the agentic enterprise will be the ones that survive what comes next.