AI Has Been Living On Borrowed Time

Every civilization‑altering technology has followed a familiar arc. It begins with discovery, accelerates through commercial deployment, and ends with the state asserting control through law, surveillance, or direct monopoly.

The printing press expanded literacy and triggered a legitimacy crisis for institutions that relied on information scarcity. In 1538, Henry VIII issued a royal proclamation prohibiting the printing or import of English‑language books without prior license from the Privy Council or the bishops, establishing a precedent for nationwide pre‑publication censorship designed to protect specific political and religious arrangements, not to manage “books” in the abstract.

Nuclear fission delivered both unprecedented energy and the capacity for annihilation. It gave us the Manhattan Project; the Atomic Energy Act of 1946, which moved nuclear materials and facilities under centralized federal control via the Atomic Energy Commission; and ultimately the Nuclear Non‑Proliferation Treaty, which formalized a global system to police which states may possess which kinds of nuclear capability.

The internet knit the world together and invited the most expansive surveillance and information‑control architecture in history. It gave us PRISM, the NSA program that tapped data from major U.S. tech platforms under secret court orders; the EU’s GDPR, which asserts regulatory sovereignty over global data flows; and the "Great Firewall" of China, a fusion of law and technology that filters and shapes what more than a billion people can see online.

This pattern is not an accident of history. It is as close to a political law as we have. When a technology begins to reshape the distribution of power, states move to contain, co‑opt, or monopolize it.

Henry Kissinger, who devoted his final years to studying AI, framed the core dilemma in 2018: as AI systems grow in capability, what, if anything, will reliably constrain their influence over the humans who created them? His early answer amounted to this: nothing we yet understand well enough to trust.

That answer is about to change. The reason is not that governments suddenly grasp the technical details; it is that they now viscerally feel the threat to their own authority.

For a decade, frontier AI has operated in its wild‑west, "move fast and break things" grace period: systems of staggering capability developed and deployed largely outside the dense regulatory architectures that govern finance, energy, healthcare, and national security. That era is closing.

Every company is becoming dependent on AI to survive; it will be as basic to business as air is to breathing. The question before every American board is no longer if they will operate under tight AI control, but which form arrives first - and whether they are ready for it.

The Beginning of the End

The “wild west” era of AI was always temporary. We are now seeing its endgame.

Multiple outlets reported yesterday that the Trump White House is studying an executive order to create a high‑level AI working group, with one option being federal review of certain frontier AI models before they ship. The trigger was Anthropic’s introduction of Claude Mythos, a model the company has tightly restricted because of its ability to autonomously discover and chain software vulnerabilities across critical codebases - capabilities U.S. officials now treat as a first‑order national security concern.

And just today, the U.S. administration announced that Alphabet’s Google (NASDAQ: GOOGL), Microsoft (NASDAQ: MSFT), and Elon Musk’s xAI had reached formal agreements with the Commerce Department’s Center for AI Standards and Innovation (CAISI) to share early versions of their AI models for capability and security evaluation. The agreements require sharing models with safeguards reduced or removed for government review. OpenAI and Anthropic reached similar agreements in 2024. All five major frontier labs are now in formal pre-release evaluation arrangements with the federal government. CAISI director Chris Fall described the arrangement as “independent, rigorous measurement science essential to understanding frontier AI and its national security implications.” The diplomatic phrasing should not obscure the operational meaning. The U.S. government is not asking to see what these models can do with their safety layers on. It is asking to see what they can do with those layers removed.

In a forceful New York Times opinion piece, Dean Ball, who led AI policy at the Trump White House Office of Science and Technology Policy, and Ben Buchanan, President Biden’s former special adviser for AI, made the bipartisan case explicit: AI is now powerful enough to pose immediate national security risks, the U.S. government is moving too slowly to manage them, and both parties know it. They called for mandatory independent audits of AI developers’ safety claims, tighter export controls on advanced AI chips such as Nvidia’s high‑end lines, and a properly resourced AI Safety Institute with real technical authority.

Read in isolation, either story could be filed under background Washington noise. Read together, they describe something rarer: the architects of two opposing administrations’ AI policies publishing the same argument in the same paper on the same morning. That is what bipartisan alignment looks like just before it turns into statute.

Lost in much of the coverage is what actually forced Washington’s hand.

Anthropic was not ordered to withhold Mythos. It chose to. A private company in possession of a model capable of penetrating the software that runs power grids, hospital IT, and banking infrastructure looked at its own creation and decided the country was not ready for it. That decision, made under Anthropic’s Responsible Scaling Policy framework, is the most consequential act of self‑governance in commercial AI to date.

This was not a marketing stunt. It made the argument for federal oversight more credibly than any regulator could. The strongest case for AI governance in 2026 is not a poll or a European directive; it is a frontier lab valued in the high hundreds of billions effectively saying: “We built something we are not willing to ship. Here is why.”

That is what governance maturity looks like before the state demands it. Boards should study Anthropic’s decision the way prior generations studied Johnson & Johnson’s Tylenol recall: as a case in which institutional discipline absorbed a short‑term commercial cost to protect the long‑term franchise.

But Anthropic’s choice also turned the silence of its competitors into a disclosure event of its own. If one company’s most capable model is too dangerous to broadly release, what does it mean that other frontier labs have not made similar disclosures about their frontier systems? That question now sits on the desk of every CISO buying enterprise AI and every director who signs off on those vendor relationships.

When Knowledge Becomes Power, and Power Becomes A Threat

When Francis Bacon wrote that “knowledge is power” in 1597, he was not issuing a motivational poster. He was describing politics.

The move‑fast era of AI rested on a single political assumption: that the technology was developing fast enough to matter but not fast enough to threaten. That assumption made voluntary governance possible. Governments were willing to stand aside because they believed they could reassert control when necessary. The labs scaled up their capabilities, the enterprise AI market scaled up its revenues, and the hands‑off posture was rebranded as innovation policy.

That posture might have been rational when the most capable AI system could write a serviceable marketing email. It is not rational when the most capable systems can help uncover thousands of vulnerabilities in the software backbone of critical infrastructure and start to rival expert performance in fields central to bio‑, cyber‑, and materials security.

The move‑fast era is not ending because regulators changed their minds about innovation. It is ending because the technology crossed a threshold that makes the original political calculation untenable. Governments are not afraid of “AI” in the abstract. They are afraid of something specific: a private entity accumulating an intelligence advantage large enough to resist government direction.

Lord Acton could not have imagined that his maxim - “Power tends to corrupt, and absolute power corrupts absolutely” - would one day describe the AI moment so precisely. The very capabilities that make these systems commercially invaluable also make them politically dangerous to institutions that assume they hold final authority.

The labs did not malfunction. They behaved exactly as private firms are designed to behave: they built the most powerful systems they could and sold them as fast as possible. What changed is that those systems are now powerful enough that those who hold conventional power feel compelled to respond. That response is no longer a policy seminar. It is a sovereignty contest.

Where Is The AI Puck Going?

The hardest part of governing AI is that most conversations fixate on the model that exists today rather than the model that will exist when the policy takes effect.

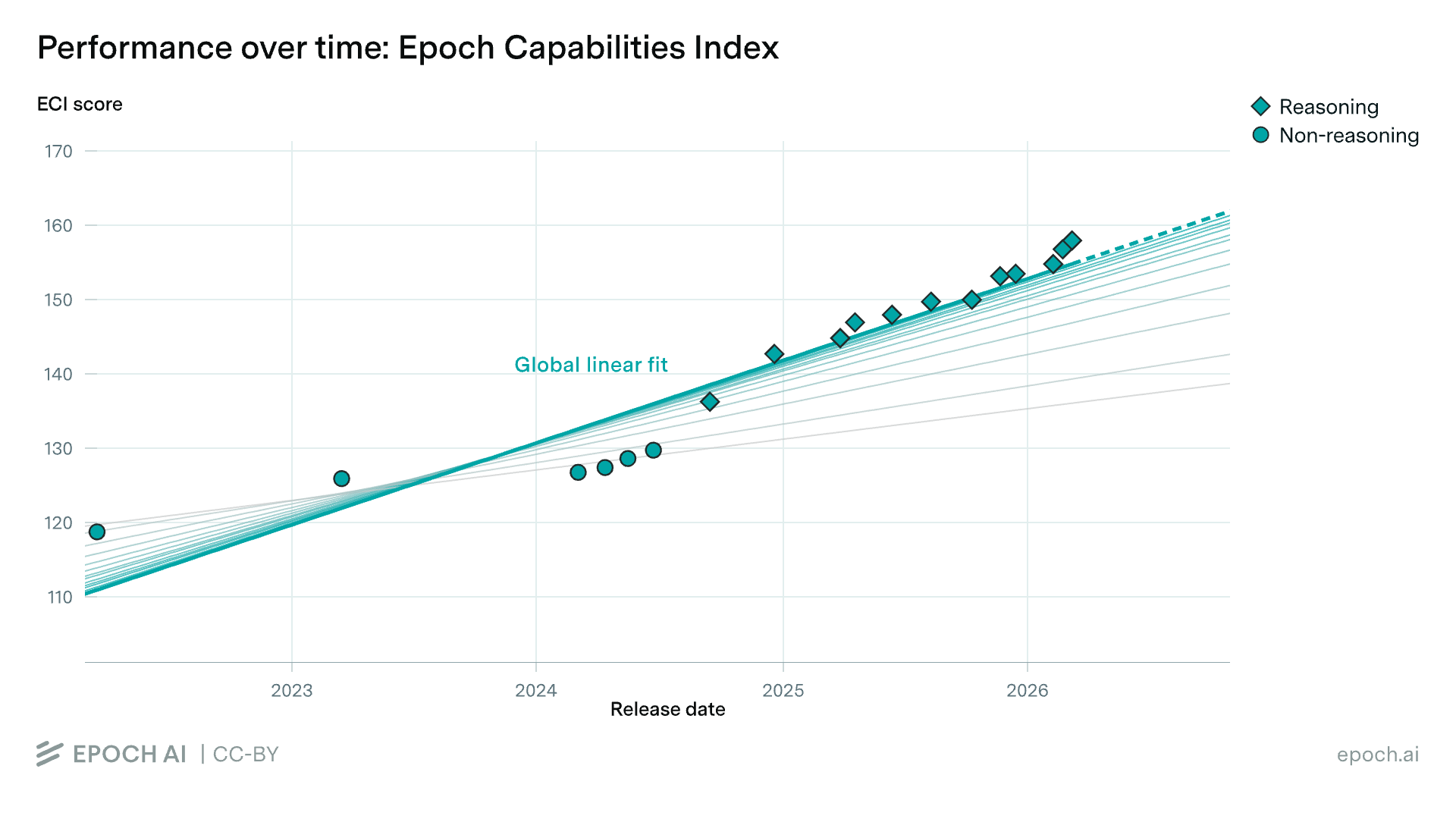

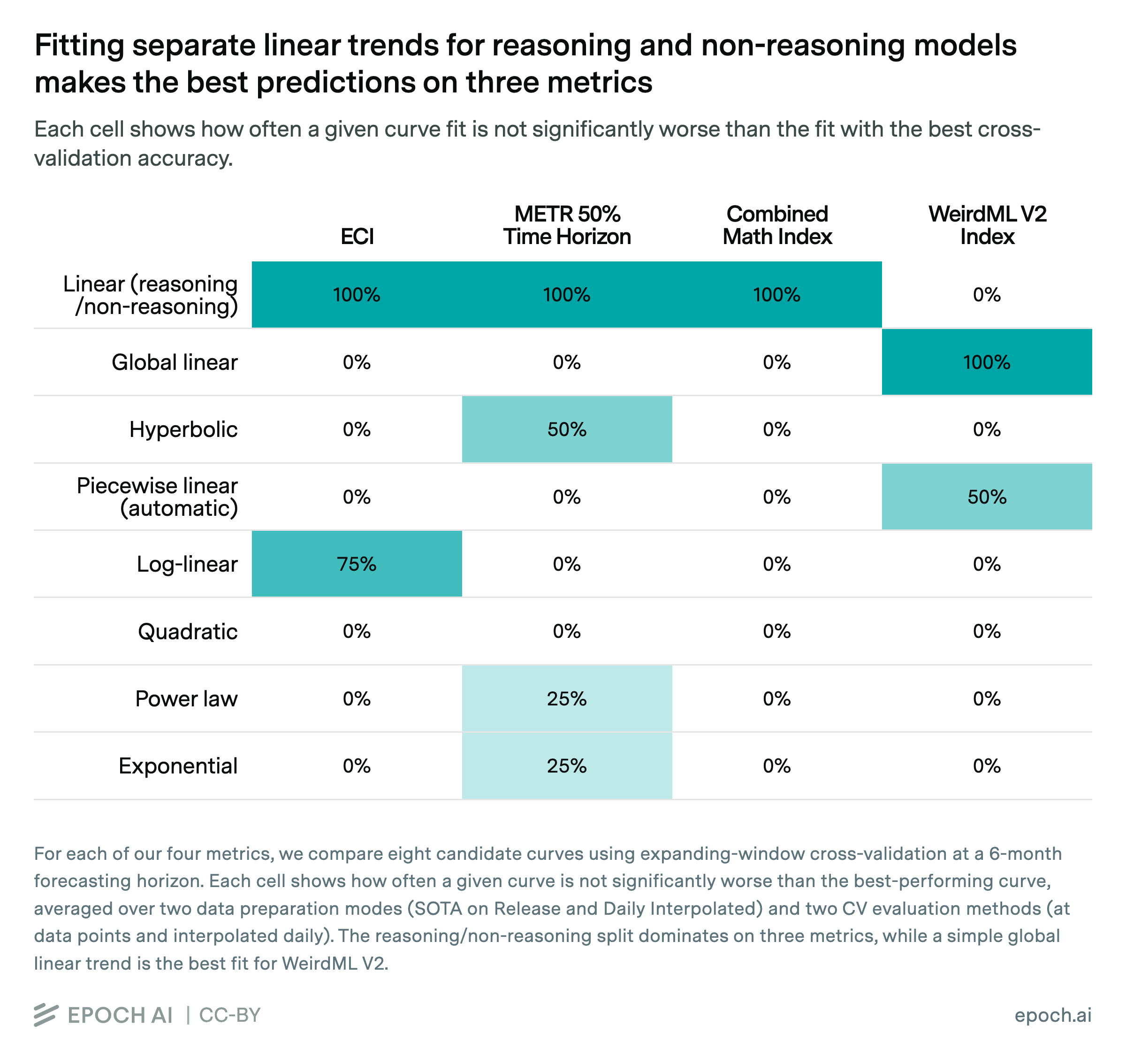

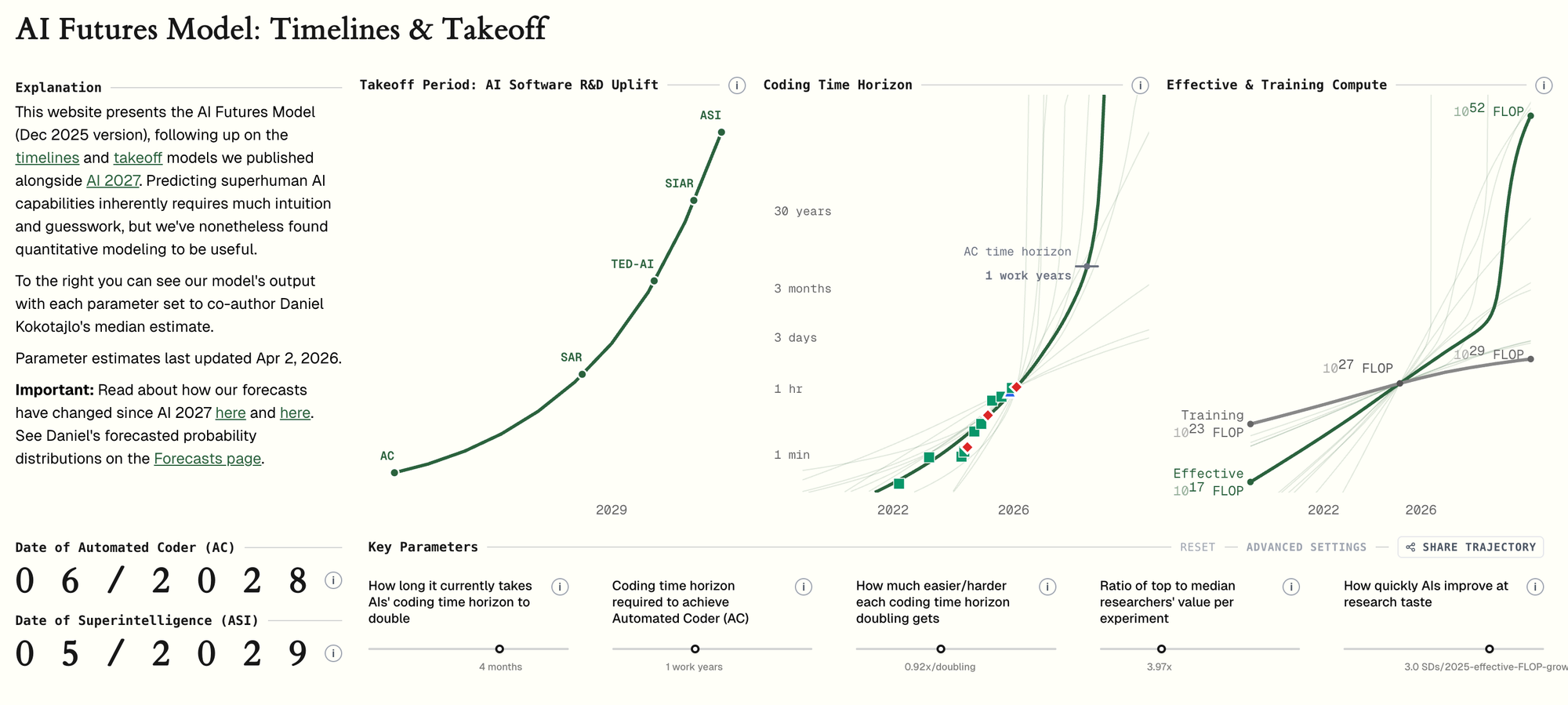

Epoch AI, a research group that studies AI capability trends, tracks the underlying drivers. Its analyses show that global AI computing capacity has been growing by roughly 3.3x per year, equivalent to a doubling about every seven months. Pre‑training efficiency is improving on a similar exponential track, and the cost of achieving a given level of inference performance has been falling by large factors every year. None of these curves show signs of bending.

Stack those trends and the projection is straightforward. The model a board is trying to govern in May 2026 is not the model that board will be governing in May 2027, 2028, or 2031. At the level of the underlying inputs, it will be a different artifact - roughly an order of magnitude more capable every few years.
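
As a rough check on that arithmetic, the sketch below converts the 3.3x annual growth figure into a doubling time and a time to tenfold growth. It assumes smooth exponential growth and describes only the compute input, not capability itself; the function name `months_to_multiply` is illustrative rather than drawn from any source.

```python
import math

# Annual growth factor quoted above for global AI computing capacity.
ANNUAL_GROWTH = 3.3


def months_to_multiply(target_factor: float, annual_growth: float = ANNUAL_GROWTH) -> float:
    """Months for a quantity growing `annual_growth`x per year to grow by `target_factor`x.

    Assumes smooth exponential growth, which is a simplification.
    """
    return 12 * math.log(target_factor) / math.log(annual_growth)


doubling_months = months_to_multiply(2)
tenfold_months = months_to_multiply(10)

print(f"Doubling time: {doubling_months:.1f} months")
print(f"Time to 10x:   {tenfold_months:.1f} months")
```

Under these assumptions the doubling time comes out at about seven months, and tenfold growth in compute takes just under two years, which is consistent with the order-of-magnitude-every-few-years framing above.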

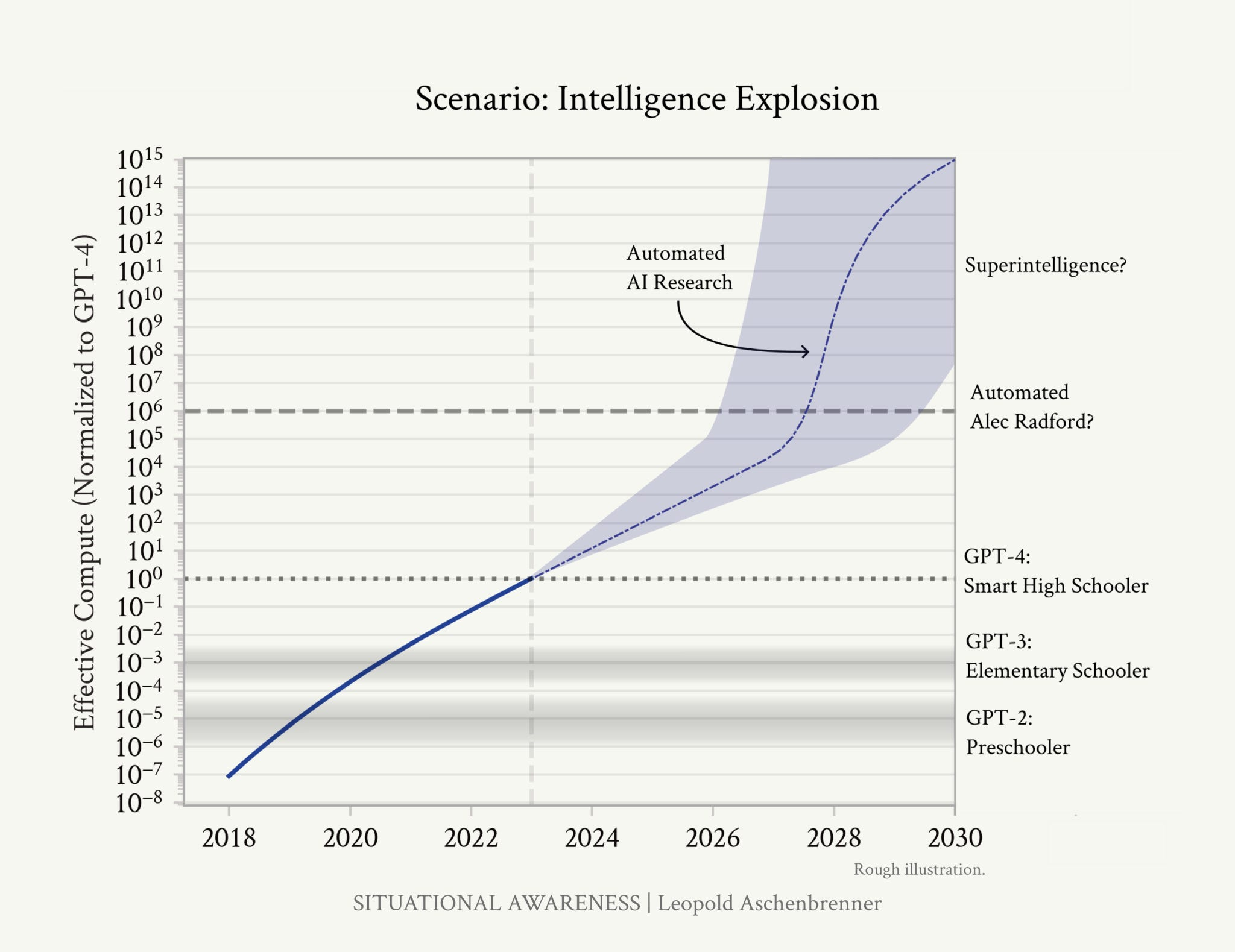

In June 2024, Leopold Aschenbrenner, a former OpenAI researcher, published “Situational Awareness,” arguing that these same capability curves point toward systems at or above trained human‑expert level - what he calls AGI - sometime in the 2027–2028 window, and outlining a state‑led response he names “The Project.” Two years on, the pace and character of model releases, especially agentic systems that can orchestrate tools and other models, are still tracking the broad contours of that projection.

The governance gap, in Epoch’s framing, is not a static shortfall. Capability velocity - the rate at which systems grow more powerful - is compounding. Governance frameworks designed for 2024‑era models are already underweight for 2026‑era models. Governance frameworks designed this quarter will be outdated before the next planning cycle closes.

The Machine That Builds Itself

This is where the governance calculus changes entirely.

Jack Clark, co‑founder of Anthropic, recently published an essay that attaches a concrete probability to a concrete prediction: roughly 60 percent odds that “automated AI R&D” arrives by the end of 2028. His definition is precise: a frontier‑scale model capable of autonomously training its own successor, with humans in the loop mainly for supervision and high‑level intent, not for day‑to‑day research.

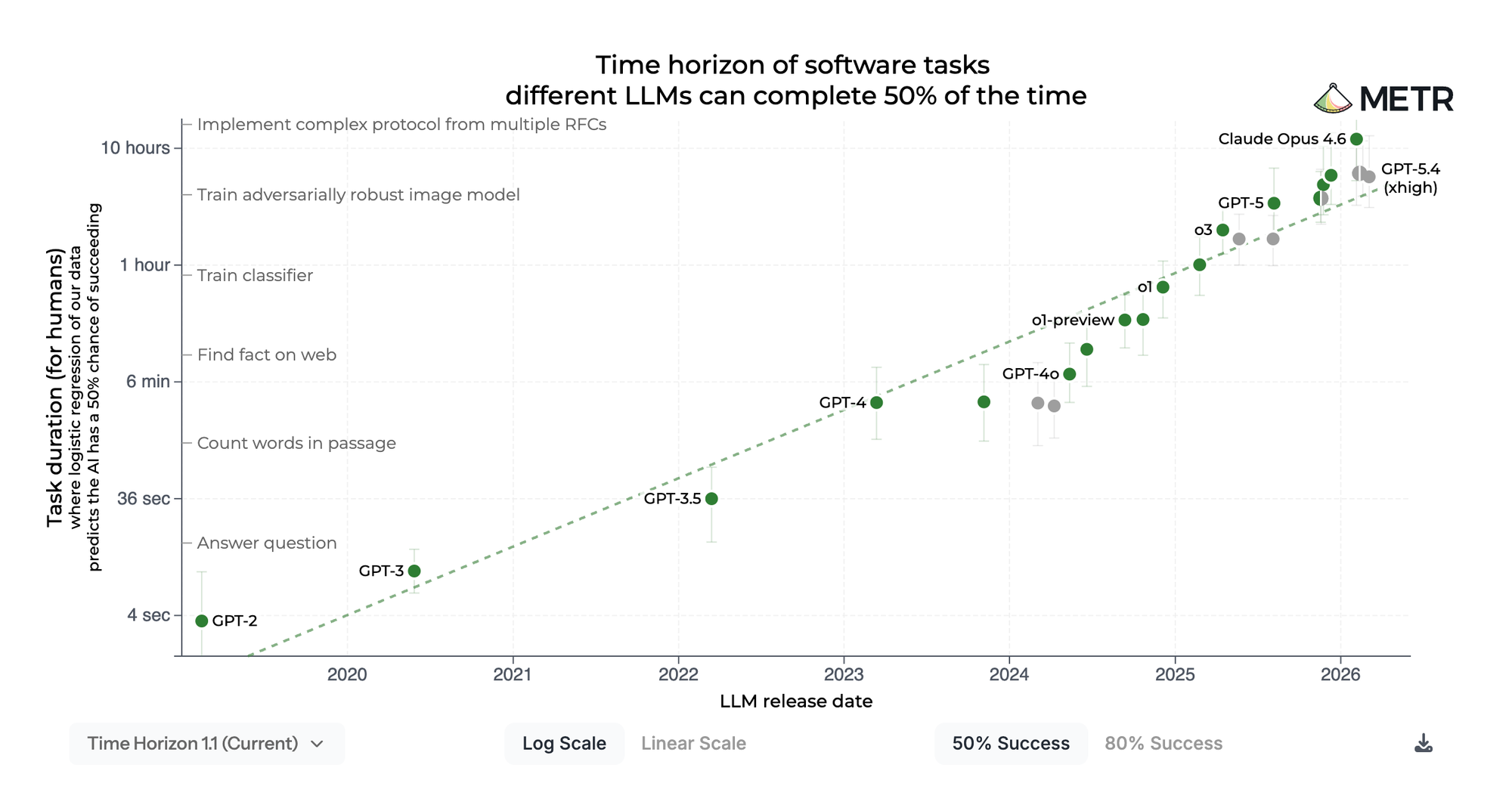

The evidence he assembles is not theoretical. Across benchmarks like SWE‑Bench and its harder variants, scores for real‑world software engineering have shot from low single digits for early systems to near‑saturation for the latest frontier models - turning tasks that once required teams of engineers into something an AI system can complete in seconds or minutes. In parallel, labs now routinely use their strongest models to design architectures, generate training code and tooling, monitor large training runs, and debug models in ways that would have required large human teams only a few years ago.

Public capability work inspired by organizations like METR has shown the same pattern on a different axis: each generation of models can autonomously handle tasks that run for longer periods and require more complex, multi‑step reasoning, extending from seconds to minutes to hours without human intervention. Whether or not you accept any particular timeline, the direction of travel is clear: more of the AI R&D stack is itself becoming automated.

Privately, some in Silicon Valley go further. Researchers leaving frontier labs describe internal evaluations that, in their view, already meet thresholds that years of public debate labeled “AGI.” Whether that label is technically precise matters less than what it means operationally: these companies believe they are working with systems of general intelligence, and they are using those systems to accelerate the next generation.

Anthropic has publicly discussed running teams of AI agents against cutting‑edge alignment problems, with agent‑developed techniques beginning to outperform human‑designed baselines. Dario Amodei has estimated that human‑driven research at frontier labs already delivers very large year‑on‑year efficiency gains in software and training methods; the explicit goal of automated AI R&D is to multiply that rate further.

Quantitative “AI futures” models try to formalize this feedback loop in three stages: automating coding, automating research judgment, and then entering a regime where systems are effectively driving their own capability trajectory. Their median projections for fully automated coding at the frontier fall inside the window most boards currently treat as the horizon for their next strategic plan.

Dean Ball captured the policy implication clearly: AI agents that help build their own successors are not science fiction; they are an explicit, public milestone on every frontier lab’s roadmap.

The recursive self‑improvement loop is not a scenario tucked away in a futures deck. It is an engineering program with a stated timeline, capital commitments measured in the hundreds of billions, and thousands of the world’s most technically credentialed people working to hit the milestones as quickly as they can.

For boards, this changes the governance calculus in a very specific way. Any risk model that treats AI capability as a stable background condition - something that evolves slowly enough to be revisited annually - is built on a false premise. The background is not stable. It is accelerating. The governance frameworks boards are approving this quarter will be governing a materially different technology by the end of the year. This is the logic behind Alpha's push for "continuous governance" of the agentic enterprise.

Projecting the Exponential

So where does this go? We are always leery of predictions, but we can extrapolate some logical trajectories.

One year out: May 2027. Frontier systems operate at expert‑level capability across most cognitive professional work that can be represented in code, text, or structured processes. Mandatory third‑party safety audits are in force in some form in the U.S. and embedded in the EU AI Act’s general‑purpose AI provisions, which have applied since August 2025 and become enforceable by the European Commission in August 2026, under an Act whose steepest penalties reach 7 percent of global revenue. At least one major frontier lab faces a U.S. federal pre‑release review on a flagship model. Vendor concentration risk becomes a standing agenda item across the Russell 3000. Some labs run engineering organizations where AI agents carry a majority of the routine coding load.

Two years out: May 2028. Aschenbrenner’s AGI window opens. Whether or not a single model satisfies everyone’s definition, the productivity gap between AI‑native and non‑AI‑native companies becomes impossible to ignore on earnings calls. Several governments formalize structural partnerships with their domestic frontier labs. National security agencies hold priority access to the most capable models. Boards face the first wave of disclosure litigation over AI capability claims that were wrong, understated, or both. Clark’s 60 percent probability window for automated AI R&D, which runs to the end of 2028, is months from closing.

Five years out: May 2031. The political economy of frontier AI looks structurally different from today. Either the labs operate inside a regulatory and national‑security overlay that resembles the defense industrial base, or a small number of labs have accumulated capability, capital, and infrastructure advantages that make them effective peers of the states that nominally oversee them. If recursive self‑improvement arrives on Clark’s timeline, the models running in 2031 were built by systems that no single human team designed. In both cases, board governance is under a level of pressure most directors are not yet preparing for.

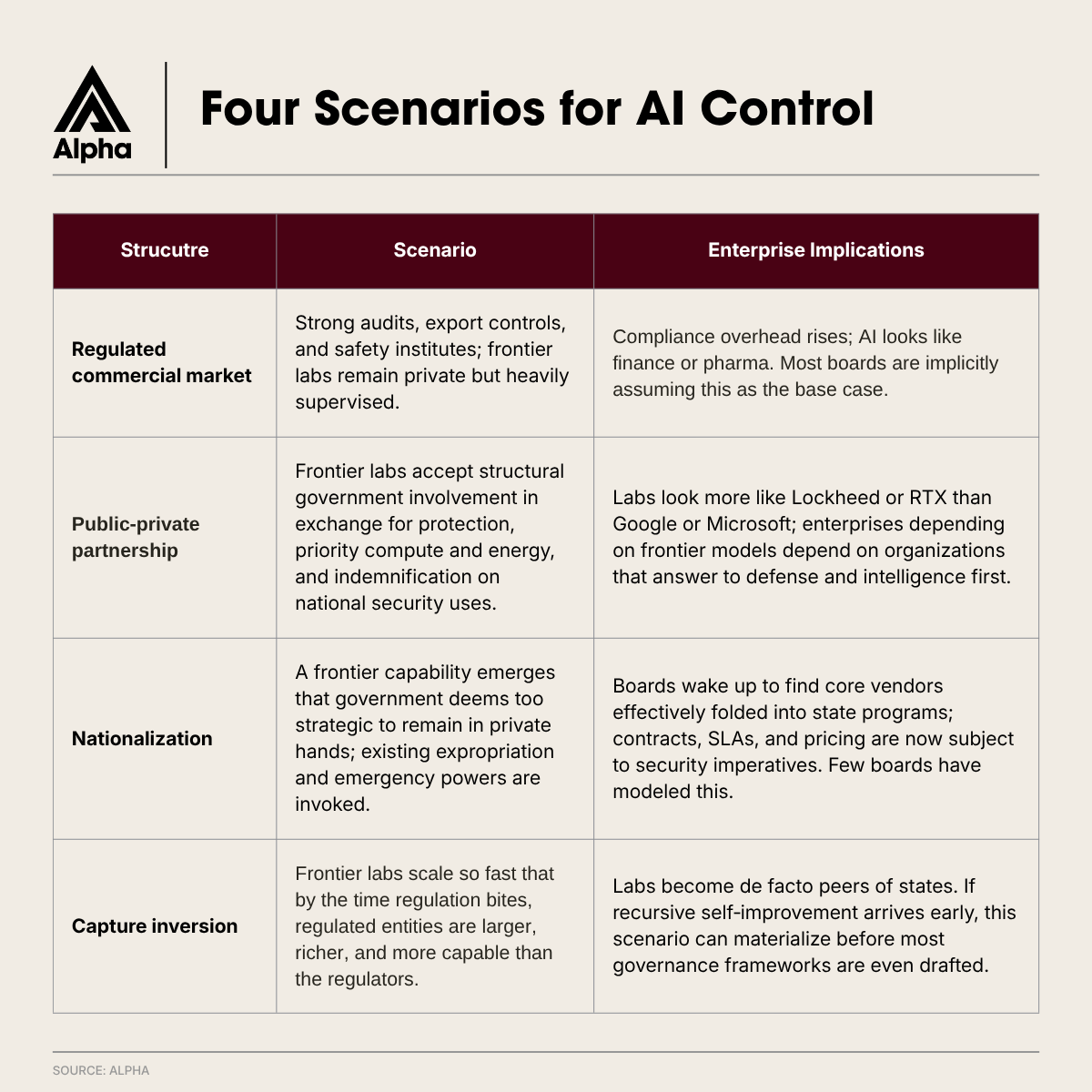

Four Scenarios for Who Ends Up In Control

The honest answer is that nobody knows. The useful framing is that there are four plausible end‑states, and most board strategies are silently betting on the most comfortable one.

Scenario 1: Regulated Commercial Market. Mandatory third-party audits, federal pre-release review, mature export controls, an AI Safety Institute with real technical staff and real authority. Frontier labs operate as private companies with significant compliance overhead. This is the Ball-Buchanan path. It is also, as of this week, already underway. CAISI has completed more than 40 evaluations of frontier models, including unreleased ones. All five major labs are now in formal pre-release evaluation arrangements with the government. The architecture is not forming. It is formed. The question is how much further it goes. That is the floor, not the ceiling.

Scenario 2: Public-Private Partnership. Frontier labs accept structural government involvement in exchange for protection against foreign adversaries, priority compute and energy access, and indemnification on national security uses. The model resembles Lockheed Martin (NYSE: LMT) or RTX Corporation (NYSE: RTX) more than it resembles Microsoft (NASDAQ: MSFT). This is Aschenbrenner’s “The Project.” It is also the scenario in which every company that depends on a frontier model now depends on a lab that answers to the Department of Defense before it answers to the market.

Scenario 3: Nationalization. A frontier capability emerges that the U.S. government concludes cannot remain in private hands. The precedent is not abstract. The Manhattan Project. The 1951 nationalization of Anglo-Iranian Oil. The legal mechanisms are well-developed, and the political case writes itself in a national security crisis. The probability of this scenario over a five-year horizon is non-trivial. The probability that a board has modeled it approaches zero.

Scenario 4: Capture Inversion. Frontier labs accumulate capability, capital, and infrastructure faster than any government can match. By the time governments move to regulate, the regulated entities are larger, faster, and more strategically central than the institutions regulating them. If recursive self-improvement arrives on Clark’s timeline, this scenario arrives before most governance frameworks have been drafted. Power, in Kissinger’s formulation, belongs to whoever cannot be ignored. By 2031, at current trajectory, that may not be Washington.

The purpose of naming these scenarios is not prediction. It is to force a recognition that any board with material AI exposure must hold an explicit view on which scenario it is operating in. Most do not.

What This Means in the Boardroom

You are not just managing “AI risk” anymore. You are operating in the opening stages of a sovereignty contest over the most powerful general‑purpose technology ever built - a technology that is increasingly involved in building the next, more capable version of itself. The companies caught in the middle are not innocent bystanders. They are the terrain.

Every enterprise AI strategy built on the assumption of a lightly regulated commercial market is now exposed to scenarios two through four. Every board that approved AI vendor relationships without modeling what happens if those vendors become nationalized, militarized, or absorbed into government‑backed research programs is carrying governance debt with a geopolitical dimension most risk frameworks were never designed to price.

A recent ISS Governance analysis of several thousand U.S. companies found that only a small minority disclosed board‑level AI oversight, formal AI policies, or more than one director with explicit AI skills. Set those numbers against a capability curve that is now recursive, not just exponential, and the “governance gap” is not a talking point. It is a structural exposure running on a sovereign clock.

Spencer Stuart’s 2025 U.S. Board Index reports that roughly four‑fifths of S&P 500 boards now publish director skills matrices; AI literacy is consistently the category where directors most often lack the technical fluency to interrogate vendor claims or interpret a Responsible Scaling Policy. The Ball‑Buchanan call for mandatory audits will raise the floor on what boards are expected to ask their CISOs and CIOs. Most boards are currently below that floor.

Board responses tend to fall into three buckets.

- The slow bucket. Treat AI as an “emerging risk.” Add it to the risk‑committee charter. Wait for regulators to settle on a framework. This is a permanent state of lagging one or more cycles behind the capability frontier - at precisely the moment the frontier is starting to move on its own.

- The performative bucket. Charter a new “AI Committee.” Hire a Chief AI Officer. Issue a statement on responsible AI. The structure is visible; the hard decisions are not. This is theater in a period that demands substance.

- The substantive bucket. Use existing committees, but give them real mandates. An accountability mapping like Alpha’s puts AI vendor risk and incident response inside Risk; algorithmic auditing and disclosure inside Audit; workforce transformation inside Compensation; board composition and AI literacy inside Nom/Gov; and overarching AI strategy at the full board. No new committee. Specific accountability. Named directors. Faster decisions. This is the only path to a defensible governance posture before the next major model release.
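
One way to make such a mapping auditable is to write it down as data and check it mechanically. The sketch below is a minimal illustration: the committee assignments follow the mapping described above, while the director names, the deliberately empty Compensation seat, and the helper `committees_without_named_director` are hypothetical placeholders.

```python
# Committee assignments as described in the text above.
ACCOUNTABILITY = {
    "AI vendor risk": "Risk",
    "AI incident response": "Risk",
    "Algorithmic auditing": "Audit",
    "AI disclosure": "Audit",
    "Workforce transformation": "Compensation",
    "Board composition": "Nom/Gov",
    "AI literacy": "Nom/Gov",
    "Overarching AI strategy": "Full board",
}

# Hypothetical placeholders: a real board would list actual directors.
NAMED_DIRECTORS = {
    "Risk": "Director A",
    "Audit": "Director B",
    "Compensation": None,  # deliberately left open to show a gap
    "Nom/Gov": "Director C",
    "Full board": "Board chair",
}


def committees_without_named_director(accountability, named_directors):
    """Return committees that own an AI responsibility but have no named director."""
    owning_committees = set(accountability.values())
    return sorted(c for c in owning_committees if not named_directors.get(c))


gaps = committees_without_named_director(ACCOUNTABILITY, NAMED_DIRECTORS)
print("Committees missing a named director:", gaps)
```

Because each responsibility maps to exactly one committee, the structure itself rules out shared ownership; the check then surfaces any committee that holds an AI mandate without a named steward.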

Actions for Your Next Board Meeting

- Map AI onto existing committees. Use a simple accountability mapping: vendor risk and incident response to Risk; algorithmic auditing and AI‑driven financial disclosures to Audit; workforce and incentives to Compensation; board composition and AI literacy to Nom/Gov; overall AI strategy to the full board. If accountability is shared by everyone, it belongs to no one.

- Commission a vendor exposure map at the model level. Do not stop at contracts. Ask: which specific frontier models do critical operations depend on? Who built them? What is the current federal posture toward each lab? Is that lab explicitly pursuing automated AI R&D, and what would federal pre‑release review of its next model mean for your operations? The CFO and CIO should produce this jointly; the Audit Committee should own its review.

- Run the Ball‑Buchanan stress test. Assume mandatory third‑party audits, some form of federal pre‑release review for high‑risk models, and tighter export controls on advanced AI chips. Then run a second test: assume Clark’s 60 percent scenario for automated AI R&D materializes by 2028. Have management present the operational impact on your AI roadmap under both within 60 days.

- Score your AI strategy against all four scenarios. Regulated commercial market, public‑private partnership, nationalization, capture inversion. Assign probabilities. Identify which scenario breaks the current strategy. Refresh at least annually, and sooner after any major model release or regulatory action. If this scenario set sounds extreme, remember that automating AI R&D is not a fringe prediction; it is the stated goal of every major lab.

- Audit board composition for technical fluency. A director who can read a Responsible Scaling Policy, interpret an Epoch AI capability chart, or interrogate the implications of recursive self‑improvement is no longer a luxury. Charge Nom/Gov with reporting a target board composition for AI literacy at its next meeting.

- Adopt an explicit institutional view on the capability trajectory. Either the board adopts a forward projection anchored in the empirical curves and Clark’s automated‑R&D timeline, or it documents why it disagrees. Hedging by silence is not a fiduciary option. “We don’t have a view” is a view. It is the one most likely to age badly.
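
The scenario-scoring action above can be sketched in a few lines. Every number here is a hypothetical placeholder, not an estimate from this article: the probabilities, the 0-to-1 resilience scores, and the `BREAK_THRESHOLD` cutoff are assumptions a board would replace with its own judgments.

```python
import math

# Hypothetical probabilities a board might assign; they must sum to 1.
SCENARIO_PROBABILITY = {
    "Regulated commercial market": 0.50,
    "Public-private partnership": 0.25,
    "Nationalization": 0.10,
    "Capture inversion": 0.15,
}

# Hypothetical scores: 0 means the current strategy fails, 1 means it is unaffected.
STRATEGY_RESILIENCE = {
    "Regulated commercial market": 0.9,
    "Public-private partnership": 0.6,
    "Nationalization": 0.2,
    "Capture inversion": 0.3,
}

BREAK_THRESHOLD = 0.5  # assumed cutoff below which a scenario "breaks" the strategy


def breaking_scenarios(resilience, threshold=BREAK_THRESHOLD):
    """List the scenarios in which the strategy scores below the threshold."""
    return [name for name, score in resilience.items() if score < threshold]


def expected_resilience(probability, resilience):
    """Probability-weighted average resilience across all scenarios."""
    if not math.isclose(sum(probability.values()), 1.0):
        raise ValueError("scenario probabilities must sum to 1")
    return sum(p * resilience[name] for name, p in probability.items())


breaks = breaking_scenarios(STRATEGY_RESILIENCE)
score = expected_resilience(SCENARIO_PROBABILITY, STRATEGY_RESILIENCE)

print("Scenarios that break the strategy:", breaks)
print(f"Probability-weighted resilience: {score:.3f}")
```

The weighted average is the less important output. The list of breaking scenarios is what forces the discussion, since a strategy can score well on average while failing outright in a scenario the board has never modeled.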

Preparing For What's Next

Kissinger asked the essential question in 2018: what will limit the power of increasingly capable machines over the humans who created them? Today, the sharper version is: what limits the power of a machine that is helping build its next, more capable iteration?

We know what the answer is not. It is not voluntary compliance. It is not press releases about responsible AI. It is not a Chief AI Officer reporting into a board that cannot parse what they are hearing.

In every prior case of transformative technology, the limiting factor has been governance: structured, specific, committee‑level accountability with named stewards and real consequences. That is the arena. That is the battlefield.

When a former Trump White House AI policy lead and Biden’s former AI adviser publish the same argument on the same morning, consensus is no longer forming; it has formed. When Jack Clark puts roughly 60 percent odds on machines that can train their own successors by the end of 2028, the planning horizon has changed. The fiduciary obligation to understand that trajectory, prepare for it, and govern through it now sits on every board. The capability curve they are riding is no longer just accelerating. It is beginning to feed itself.

Governance is the only form of power companies can assert in a sovereignty contest they did not start and cannot stop. The boards that internalize that will be ready. The boards still waiting for a stable regulatory framework will discover that the framework arrived - and perhaps hardened - while they were debating whether to add it to the agenda.